What Is a Unified Social Media API? A 2026 Guide

A unified social media API normalizes data from 21 platforms into one schema. Covers field examples, AI-agent fitness scoring, and build vs. buy. (2026)

Unified Social Media API: The Complete Developer Guide (2026)

A unified social media API is a single endpoint that returns data from every platform in the same schema. This guide covers what that actually means, how unified APIs work under the hood, and how to evaluate one without falling for the "one API, every platform" marketing claim — which almost always means "six platforms, three of which are half-broken."

The problem is concrete. Instagram returns followers_count. TikTok returns follower_count. YouTube returns subscriberCount — and it's a string, not a number. Those are three field names for the same concept across three platforms, and that inconsistency means writing a custom parser for every platform you add. This guide covers field-level normalization, a TypeScript code walkthrough, an AI-agent fitness scorecard, and a neutral build-vs-buy framework so you can decide whether to integrate a unified API or build your own.

Why Do Native Social Media APIs Cost Engineering Weeks?

The integration maintenance burden is not theoretical. Flockler's platform breakdown documents it directly:

| Platform | Auth Method | Rate Limit Behavior | SDK Maintenance |
|---|---|---|---|
| Meta (Instagram / Facebook) | OAuth 2.0 + App Review | Varies by endpoint and permission level | Frequent Graph API versioning |
| TikTok | OAuth 2.0 + App Review + Sandbox | Per-minute sliding window | Mandatory migration on deprecation |
| X (Twitter) | OAuth 2.0 + Paid tiers ($100–$5,000/month) | Strict, tier-dependent | Major v1.1 to v2 migration required |
| YouTube | OAuth 2.0 + API key | 10,000 quota units/day default | Periodic scope changes |
| LinkedIn | OAuth 2.0 + Partner program | Varies by product and permission | Restricted API access changes |

Flockler puts the compounding cost plainly: "For a team managing five direct integrations, this translates to five authentication flows, five data parsers, five rate limit handlers, and five separate API documentation sets to monitor."

The Postman 2025 State of the API Report — based on a survey of 5,700+ developers, architects, and executives — adds further context: 69% of developers spend 10+ hours per week on API-related tasks, and 93% of API teams face collaboration blockers caused primarily by inconsistent documentation and definitions. Only 26% use semantic versioning, meaning most teams cannot communicate the impact of API changes to downstream consumers.

For teams building AI-powered products, the gap is sharper: 89% of developers use generative AI in their daily work, but only 24% design APIs with AI agents in mind. "When your API lacks predictable schemas, typed errors, and clear behavioral rules," Postman concludes, "AI agents can't function as they're intended to."

Reducing N integration points to one, without sacrificing platform coverage, is what a unified social media API is built to do. If you want to evaluate specific scraping APIs for your stack, a separate post covers the commercial landscape. This guide covers the underlying concept.

What Is a Unified Social Media API?

A unified social media API is a single endpoint that returns structured data from multiple social platforms in one consistent schema. You make one request, and the response has the same field names whether you queried TikTok, Instagram, YouTube, or any of the other platforms the API covers.

Flockler's definition is a useful starting point: "A unified social media API is a single interface that connects to multiple social platforms and returns content in a standardized format. You make a single API call, and it retrieves posts, images, videos, and metadata from Instagram, TikTok, Facebook, X, YouTube, LinkedIn, and other channels." That definition is accurate for display and aggregation use cases — retrieving social content to embed in a website or social wall.

For data extraction and AI workflows, "unified" means something more specific: field-name parity across every platform, computed enrichment fields that native APIs do not return, and a deterministic schema that does not change shape between calls. The distinction matters in practice, and the section below covers it.

How Does a Unified API Work?

The request flow has three steps.

- Your system sends a single HTTP request with a platform identifier and a handle or resource ID.

- The unified API translates that into the native call for the target platform, handles authentication, and receives the platform-native response.

- The normalization layer maps every platform-native field to the unified schema (follower_count, followers_count, and subscriberCount all become followers), then returns the normalized object to your caller.

From your application's perspective, you called one endpoint, passed one API key, and received one response shape. The authentication flows, rate limit handling, and SDK version management happen inside the unified API, not in your code. You can see unified responses in action in the Explorer before writing a single line of integration code.
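The three steps above can be sketched as a small dispatcher. This is an illustrative sketch, not SocialCrawl's implementation: the `PlatformAdapter` interface, the `getProfile` function, and the stub adapters are hypothetical names, and the stubs return canned payloads instead of calling real platform APIs.

```typescript
// Hypothetical sketch of the flow: route by platform, call natively, normalize.
type SocialPlatform = "tiktok" | "instagram" | "youtube";

interface UnifiedProfile {
  platform: SocialPlatform;
  handle: string;
  followers: number;
}

type RawPayload = Record<string, unknown>;

// One adapter per platform hides the native call and the native field names.
interface PlatformAdapter {
  fetchNative(handle: string): Promise<RawPayload>;
  normalize(raw: RawPayload): { handle: string; followers: number };
}

// Stub adapters: canned payloads stand in for authenticated native requests.
const adapters: Record<SocialPlatform, PlatformAdapter> = {
  tiktok: {
    fetchNative: async (handle) => ({ unique_id: handle, follower_count: 1200 }),
    normalize: (raw) => ({
      handle: String(raw["unique_id"]),
      followers: Number(raw["follower_count"]),
    }),
  },
  instagram: {
    fetchNative: async (handle) => ({ username: handle, followers_count: 1200 }),
    normalize: (raw) => ({
      handle: String(raw["username"]),
      followers: Number(raw["followers_count"]),
    }),
  },
  youtube: {
    // YouTube reports the count as a string, so Number() coerces it here.
    fetchNative: async (handle) => ({ customUrl: `@${handle}`, subscriberCount: "1200" }),
    normalize: (raw) => ({
      handle: String(raw["customUrl"]).replace(/^@/, ""),
      followers: Number(raw["subscriberCount"]),
    }),
  },
};

async function getProfile(platform: SocialPlatform, handle: string): Promise<UnifiedProfile> {
  const adapter = adapters[platform]; // step 1: pick the platform's adapter
  const raw = await adapter.fetchNative(handle); // step 2: native call (stubbed here)
  return { platform, ...adapter.normalize(raw) }; // step 3: return the unified shape
}
```

With these stubs, `getProfile("youtube", "example")` resolves to an object whose `followers` value is the number 1200, even though the stubbed native payload carried the string "1200". The caller never sees which native field name was involved.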

The additional layer that separates a data-extraction unified API from a simple normalization proxy: computed fields. Native APIs return raw numbers. A unified API built for downstream reasoning can also return derived metrics — engagement_rate, estimated_reach, content_category, and language — that no native platform API calculates for you.

Read APIs vs. Write APIs: What a Unified API Actually Does

Flockler states it clearly in their own FAQ: "The API does not support writing data, such as publishing posts or scheduling content." That sentence is a useful anchor for understanding the two categories of unified social media APIs.

Write-side tools (Flockler, Outstand, Ayrshare, Buffer) are built for aggregation, moderation, cross-posting, and social wall display. The label is loose: some of them, Flockler included, only read social content, but their output is visual and their consumer is a website visitor or a moderation queue. If you need to curate and display social content on a website, those tools are the right choice.

Read-side APIs are built for a different output consumer: downstream systems. Data pipelines, analytics engines, AI agents, compliance audits, and research workflows all need structured, normalized data — not a display-ready feed. The table below maps the distinction:

| Dimension | Write-side (e.g., Flockler) | Read-side (e.g., SocialCrawl) |
|---|---|---|
| Primary use | Social walls, moderation, cross-posting | Data pipelines, AI agents, analytics |
| Schema goal | Display-ready content aggregation | Normalized, structured data |
| Output consumer | Website visitors, moderation tools | Downstream systems, AI agents |
| Computed enrichment | Not typically provided | engagement_rate, estimated_reach, content_category, language |

Outstand's listicle of "unified social media APIs" includes Brandwatch (social listening), Hootsuite (scheduling), and Buffer (posting) in the same list as data-extraction APIs. None of them are unified APIs in the read-side sense. The conflation is the source of most confusion when developers search for multi-platform data access. For Instagram data extraction specifically, the read-vs-write distinction determines whether you even get access to the fields your pipeline needs.

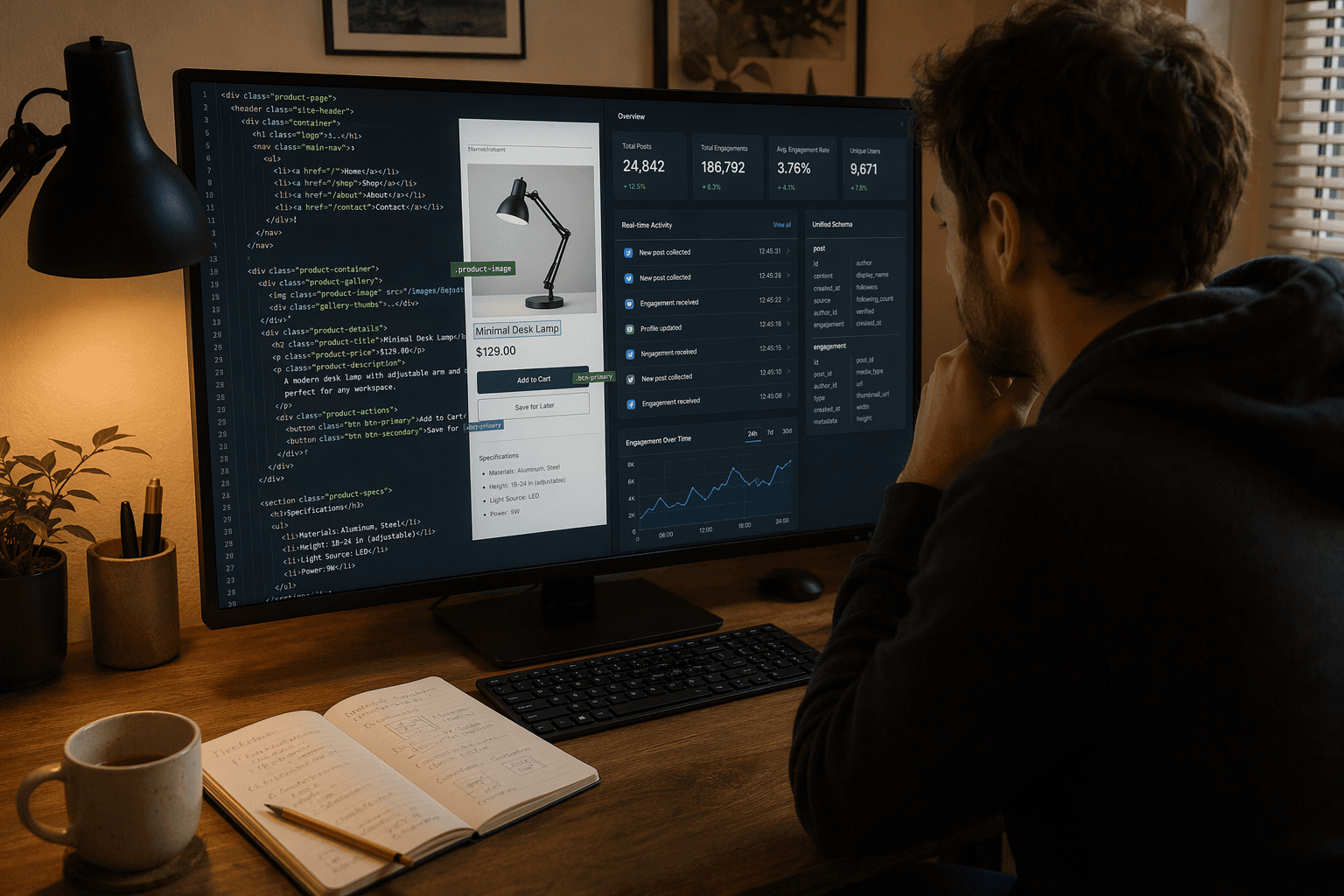

What Does "Unified Schema" Actually Mean? A Field-by-Field Breakdown

Schema normalization is the difference between writing one parser and writing N parsers — one per platform. The table below shows what the same creator looks like across three native APIs versus a unified API response.

Creator: @charlidamelio across TikTok, Instagram, and YouTube

| Field | TikTok Native | Instagram Native | YouTube Native | SocialCrawl Unified |
|---|---|---|---|---|
| Follower count | follower_count: 155000000 | followers_count: 155000000 | subscriberCount: "155000000" (string) | followers: 155000000 (number) |
| Handle | unique_id: "charlidamelio" | username: "charlidamelio" | customUrl: "@charlidamelio" | handle: "charlidamelio" |
| Bio | signature: "..." | biography: "..." | description: "..." | bio: "..." |
| Post count | aweme_count: 2700 | media_count: 2700 | videoCount: "2700" (string) | post_count: 2700 (number) |
| Last updated | create_time: 1711411200 | timestamp: "2024-03-26T..." | publishedAt: "2024-03-26T..." | created_at: "2024-03-26T..." (ISO 8601) |

Three platforms, five fields, fifteen different field names across the native APIs, and YouTube returns numeric values as strings, which means your parser needs type coercion just to do arithmetic. SocialCrawl's unified column uses one field name per concept, consistent types, and ISO 8601 timestamps everywhere.

That is what "unified schema" means. Not approximate. Not "roughly the same shape." Exact field-name parity across all 21 platforms, with consistent types.
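The mapping in the table can be written down as a single typed function. The sketch below is an assumption-laden illustration: the native interfaces are flattened to the five fields shown in the table and do not reproduce the exact nesting of each platform's real response, and `toUnified` and `toCount` are hypothetical helper names.

```typescript
// Native shapes, flattened to the five fields in the table (hypothetical types).
interface TikTokUser {
  unique_id: string;
  signature: string;
  follower_count: number;
  aweme_count: number;
  create_time: number; // Unix epoch seconds
}
interface InstagramUser {
  username: string;
  biography: string;
  followers_count: number;
  media_count: number;
  timestamp: string;
}
interface YouTubeChannel {
  customUrl: string;
  description: string;
  subscriberCount: string; // numeric string
  videoCount: string; // numeric string
  publishedAt: string;
}

type NativeProfile =
  | { platform: "tiktok"; data: TikTokUser }
  | { platform: "instagram"; data: InstagramUser }
  | { platform: "youtube"; data: YouTubeChannel };

interface UnifiedCreator {
  platform: NativeProfile["platform"];
  handle: string;
  bio: string;
  followers: number;
  post_count: number;
  created_at: string; // ISO 8601
}

// Coerce numeric strings to numbers; reject empty or non-numeric input.
function toCount(value: string | number): number {
  const n = typeof value === "number" ? value : value.trim() === "" ? NaN : Number(value);
  if (!Number.isFinite(n)) throw new Error(`Not a numeric count: ${value}`);
  return n;
}

function toUnified(native: NativeProfile): UnifiedCreator {
  switch (native.platform) {
    case "tiktok": {
      const d = native.data;
      return {
        platform: "tiktok",
        handle: d.unique_id,
        bio: d.signature,
        followers: toCount(d.follower_count),
        post_count: toCount(d.aweme_count),
        created_at: new Date(d.create_time * 1000).toISOString(), // seconds to ms
      };
    }
    case "instagram": {
      const d = native.data;
      return {
        platform: "instagram",
        handle: d.username,
        bio: d.biography,
        followers: toCount(d.followers_count),
        post_count: toCount(d.media_count),
        created_at: new Date(d.timestamp).toISOString(),
      };
    }
    case "youtube": {
      const d = native.data;
      return {
        platform: "youtube",
        handle: d.customUrl.replace(/^@/, ""), // drop the leading "@"
        bio: d.description,
        followers: toCount(d.subscriberCount),
        post_count: toCount(d.videoCount),
        created_at: new Date(d.publishedAt).toISOString(),
      };
    }
  }
}
```

The discriminated union is a deliberate choice: adding a fourth platform to `NativeProfile` without adding a matching `case` becomes a compile-time error rather than a silent gap in the output.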

The second tier of unification is computed fields — these do not exist in any native API:

- engagement_rate: calculated from likes, comments, and shares relative to follower count

- estimated_reach: derived from engagement and follower data

- content_category: AI-classified from post text and media

- language: detected from post content

These four fields are why "unified" means more than consistent naming — it means data that arrives ready for downstream reasoning without a post-processing pipeline. The full schema reference in the docs covers all normalized fields per platform.
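As a rough illustration of what a computed field involves, here is one plausible way to derive an engagement rate from the inputs named above. The exact formula SocialCrawl uses is not stated in this guide, so the per-post ratio below, the `PostCounts` type, and the `engagementRate` function are all assumptions.

```typescript
// Interaction counts for a single post (hypothetical shape).
interface PostCounts {
  likes: number;
  comments: number;
  shares: number;
}

// Assumed formula: total interactions divided by follower count.
// Returns null when there are no followers, so the field stays present but empty.
function engagementRate(post: PostCounts, followers: number): number | null {
  if (followers <= 0) return null;
  return (post.likes + post.comments + post.shares) / followers;
}
```

For a post with 900 likes, 80 comments, and 20 shares on an account with 10,000 followers, this sketch yields 0.1, or 10%.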

One Integration, Six Platforms: A Code Walkthrough

The field-name differences in the previous section have a concrete consequence: every platform needs its own parsing function. Here is what that looks like when you call three native APIs directly.

Without a unified API — three platforms, three parsers:

    // Fetching followers across 3 platforms (abridged; you'd do this 6 times)
    const tiktok = await fetch(
      `https://open.tiktokapis.com/v2/user/info/?fields=follower_count,display_name`,
      { headers: { Authorization: `Bearer ${tiktokToken}` } },
    ).then((r) => r.json());
    const tiktokFollowers = tiktok.data.user.follower_count; // follower_count

    const instagram = await fetch(
      `https://graph.facebook.com/v19.0/${igUserId}?fields=followers_count`,
      { headers: { Authorization: `Bearer ${metaToken}` } },
    ).then((r) => r.json());
    const instagramFollowers = instagram.followers_count; // followers_count

    const youtube = await fetch(
      `https://www.googleapis.com/youtube/v3/channels?part=statistics&id=${channelId}&key=${ytKey}`,
    ).then((r) => r.json());
    const youtubeSubs = Number(youtube.items[0].statistics.subscriberCount); // subscriberCount (and it's a string!)

Each platform has a different base URL, different auth header format, different response structure, and different field name for the same concept. Scale that to six platforms (TikTok, Instagram, YouTube, Reddit, LinkedIn, and X) and you have six authentication flows, six parsing functions, and six sets of rate-limit logic to maintain.

With a unified API — six platforms, one parser:

    const platforms = [
      "tiktok",
      "instagram",
      "youtube",
      "reddit",
      "linkedin",
      "twitter",
    ];

    // One request per platform; the handle is URL-encoded before it goes in the query string.
    const results = await Promise.all(
      platforms.map((p) =>
        fetch(
          `https://socialcrawl.dev/v1/${p}/profile?handle=${encodeURIComponent(handle)}`,
          { headers: { "x-api-key": process.env.SOCIALCRAWL_KEY! } },
        ).then((r) => r.json()),
      ),
    );

    // Same field name everywhere: one parser, six platforms.
    const followers = results.map((r) => ({
      platform: r.platform,
      followers: r.followers,
    }));

Six platforms, one request loop. One API key, not six OAuth flows. One followers field, not six platform-specific names. One parser that works across the entire result set without a switch statement.

What that removes from your codebase:

- One API key. No per-platform OAuth flows to manage or rotate.

- One response schema. No if platform === "youtube" coercion blocks.

- Four computed fields already present. engagement_rate, estimated_reach, content_category, and language are in every response, with no post-processing pipeline needed.

The full endpoint documentation is in the API reference. Each of the 108 endpoints across 21 platforms returns the same unified schema shape.

How Do You Score a Unified API for AI-Agent Fitness?

Schema consistency is not just a developer convenience problem. It is a reliability problem for AI-agent pipelines.

Agenta's guide to structured outputs and function calling with LLMs describes the failure mode precisely: "A prompt that works perfectly in testing starts failing after a model update. Your JSON parser breaks on unexpected field types. Your application crashes because the LLM decided to rename 'status' to 'current_state' without warning."

That failure mode — inconsistent field names and types — is the exact problem a unified API's deterministic schema solves at the data layer, before any LLM is involved.

Thoughtworks Technology Radar Vol. 33 (November 2025) places "Naive API-to-MCP Conversion" in Hold. The Radar notes: "Organizations are eager to let AI agents interact with existing systems, often by attempting a naive API-to-MCP conversion." Their warning: exposing raw, inconsistent endpoints to agents creates security and reliability risks. An API connected to an MCP toolchain must have consistent schemas, typed responses, and predictable naming before the conversion adds value rather than compounding the chaos.

The Postman 2025 State of the API Report quantifies the gap: 89% of developers use generative AI in daily work; only 24% design APIs with AI agents in mind.

The scorecard below applies five criteria that an API must meet to be reliably callable from an AI agent or MCP tool. These criteria are not proprietary — they follow from what structured-output failures actually look like in production.

| Criterion | What It Means for Agent Reliability | SocialCrawl | Flockler | Native Platform APIs (aggregated) | Proxy-only tools (aggregated) |
|---|---|---|---|---|---|
| Consistent field names | followers always means followers, across every platform and every call | ✓ Pass | ✗ Fail: write-side; field names are display-oriented | ✗ Fail: per-platform naming diverges | ✗ Fail: pass-through of native inconsistencies |
| Computed fields | Returns engagement_rate, estimated_reach, content_category, language | ✓ Pass | ✗ Fail: no enrichment fields | ✗ Fail: raw platform data only | ✗ Fail: no enrichment layer |
| Deterministic schema | Optional fields are always present (null if empty, never absent) | ✓ Pass | Partial: structured but display-focused | ✗ Fail: schema varies by endpoint version | Partial: depends on implementation |
| MCP-ready | Can connect to a Claude / MCP toolchain without a custom adapter | ✓ Pass | ✗ Fail: not designed for agent toolchains | ✗ Fail: requires custom MCP adapter per platform | ✗ Fail: raw responses need normalization first |
| Rate-limit transparency | Rate-limit headers returned; pagination is cursor-based and predictable | ✓ Pass | Partial: pagination exists but not agent-optimized | ✗ Fail: varies significantly across platforms | Partial: implementation-dependent |
| Score | | 5/5 | 0–1/5 | 0/5 | 0–1/5 |

A note on honesty: native platform APIs score well on documentation transparency — TikTok, Meta, and YouTube publish detailed rate-limit and versioning guidance in their developer portals (TikTok, Instagram Graph API, YouTube Data API v3). What they cannot offer is cross-platform consistency, because they were never designed for it. Flockler's API is a well-built write-side tool for its intended purpose — the low score reflects an evaluation rubric designed for read-side data extraction, which is a different use case.

An API that fails this scorecard is not necessarily a bad API. It may be excellent for its intended use case. For AI-agent workflows, schema determinism is table stakes — not a bonus. The computed field reference in the docs covers how each enrichment value is derived.
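The "Deterministic schema" criterion is the easiest one to test mechanically. A minimal sketch, assuming a fixed list of expected keys: the `PROFILE_KEYS` list below is an illustrative subset built from field names used in this guide, not an official schema, and `findAbsentKeys` is a hypothetical helper.

```typescript
// Keys expected on every profile response (illustrative subset, an assumption).
const PROFILE_KEYS: readonly string[] = [
  "platform",
  "handle",
  "bio",
  "followers",
  "engagement_rate",
];

// Returns the keys that are absent or undefined. A null value counts as present,
// which matches the "null if empty, never absent" rule in the scorecard.
function findAbsentKeys(payload: Record<string, unknown>, keys: readonly string[]): string[] {
  return keys.filter(
    (key) => !Object.prototype.hasOwnProperty.call(payload, key) || payload[key] === undefined,
  );
}
```

Running a check like this against sample responses from each platform is a quick way to verify a vendor's determinism claim before wiring the API into an agent toolchain. An empty result means every expected key was present.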

Should You Build Your Own Unified API or Buy One?

Build your own unified API if all of these are true:

- You cover 1–2 platforms and have no near-term plans to expand

- Your schema can be locked in and your data consumers are internal only

- You have 3+ engineers available to maintain it as platforms change

- The platforms you target have stable, low-deprecation-velocity APIs

In practice, those conditions rarely hold simultaneously for teams building data pipelines. Platform APIs change. X moved from v1.1 to v2 and behind a paid tier. Meta Graph API has a documented versioning lifecycle with mandatory migration windows. TikTok's Research API adds and removes endpoints as regulatory and policy requirements shift.

Buy a unified API if any of these are true:

- You need 3+ platforms and cannot afford a per-platform integration backlog

- Schema consistency must survive platform deprecation cycles without code changes on your end

- You need computed fields (engagement_rate, estimated_reach) without building a post-processing pipeline

- Time-to-value is measured in days, not months

The economics are not ambiguous for multi-platform teams. Building and maintaining a five-platform integration is conservatively estimated at 10–20 engineering weeks at typical developer rates: a one-time cost of $30,000–$75,000, before ongoing maintenance as platforms evolve. SocialCrawl's pricing tiers run from £14/month (Starter, 5,000 credits) to £299/month (Pro, 180,000 credits), with a free tier at 100 credits to test the schema before committing. 21 platforms are available from the first API call.

The economics favor "buy" for any team covering 3+ platforms with a delivery timeline under three months. For teams covering 1–2 platforms with strong internal engineering capacity, building is a defensible choice. If you want to compare specific tool options, a separate post covers the commercial landscape.

What Should Enterprise Teams Know About Social Media Data Scraping at Scale?

Enterprise teams reaching this section are typically not asking "what is a unified API" — they are evaluating whether a unified social media data scraping layer reduces their compliance surface, fits their audit requirements, and scales to production volumes. This section addresses those questions directly.

GDPR Article 30 — data lineage reduction

GDPR Article 30 requires organizations processing personal data to maintain a written record of all processing activities: categories of data, purposes, sources, and recipients.

When a team queries social media data across five or more platforms via direct integrations, each platform integration is a separate processing activity that needs individual documentation. A unified layer that routes all queries through one integration point reduces the Article 30 compliance surface from N platform entries to one — a meaningful simplification for data protection officers managing large processing records.

SOC 2 audit trails and API observability

The Postman 2025 State of the API Report frames the audit problem clearly: "If you can't tell a human from an agent, you can't enforce least privilege, detect abuse, or meet compliance requirements." 51% of developers in the same survey cite unauthorized or excessive API calls from AI agents as their top security concern.

A unified API layer with per-request logging — timestamps, endpoints, response codes, and credit consumption — provides the audit trail that raw platform calls do not. Credit-based billing creates a natural data volume record: credit spend maps directly to query volume, which maps to data processed.
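On the client side, the same four audit fields can be captured with a thin wrapper around each call. This is a sketch under stated assumptions: `AuditRecord`, `callWithAudit`, and `totalCredits` are hypothetical names, the HTTP call is injected rather than hard-coded, and the credit cost is supplied by the caller because this guide does not say how the API reports credit consumption per response.

```typescript
// One audit entry per request: when, which endpoint, what came back, what it cost.
interface AuditRecord {
  timestamp: string; // ISO 8601
  endpoint: string; // URL without its query string
  status: number; // HTTP response code
  creditCost: number; // credits the caller attributes to this call
}

// The HTTP call is injected so the wrapper can be exercised without a network.
type HttpCall = (url: string) => Promise<{ status: number }>;

async function callWithAudit(
  url: string,
  creditCost: number,
  doCall: HttpCall,
  auditLog: AuditRecord[],
): Promise<number> {
  const res = await doCall(url);
  auditLog.push({
    timestamp: new Date().toISOString(),
    endpoint: url.replace(/\?.*$/, ""), // keep handles and other query values out of the log
    status: res.status,
    creditCost,
  });
  return res.status;
}

// Summed credit cost doubles as a record of query volume.
function totalCredits(auditLog: AuditRecord[]): number {
  return auditLog.reduce((sum, rec) => sum + rec.creditCost, 0);
}
```

In a real integration, `doCall` could be something like `(u) => fetch(u, { headers: { "x-api-key": key } })`, since a fetch response exposes `status`. Whether failed calls should be logged with a non-zero credit cost depends on billing rules this guide does not cover.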

The MCP Dev Summit NYC (April 2026) surveyed 100+ organizations across Fortune 500 manufacturers, global banks, and government contractors. The consistent finding: "Security and governance aren't features on a wish list; they're prerequisites." RBAC, rate limiting, and PII handling were flagged as gating concerns before enterprise teams would expand MCP infrastructure — requirements that a well-designed unified API layer must accommodate.

Data residency and upstream transparency

Procurement teams typically want to know where data comes from and where it is processed. SocialCrawl normalizes and enriches data in-house — the unified schema, computed fields (engagement_rate, estimated_reach, content_category, language), and deterministic response shape are applied at the SocialCrawl layer. For data residency requirements — EU vs. US processing location, contractual sub-processor disclosure — contact the team for enterprise plan details.

Credit budgeting at scale

Standard-tier endpoints cost 1 credit per call. Advanced endpoints (profile analytics, engagement history) cost 5 credits. Premium endpoints cost 10 credits. At the Pro tier (£299/month, 180,000 credits), a team running standard-tier queries can process up to 180,000 calls per month within the plan — Advanced and Premium endpoints consume 5× and 10× credits respectively. Enterprise volumes — 100 million or more monthly queries — are available on custom plans with negotiated pricing and SLA commitments. See the enterprise plan details on the pricing page.
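The credit arithmetic above is simple enough to encode as a budgeting helper. The credit costs and the Pro allowance come from this section; the function names and the example call mix are made up for illustration.

```typescript
// Credit costs per endpoint tier, as stated in this section.
const CREDIT_COST = { standard: 1, advanced: 5, premium: 10 } as const;
type EndpointTier = keyof typeof CREDIT_COST;

// Planned monthly call volume per tier.
type CallMix = Record<EndpointTier, number>;

// Total credits a planned mix of calls would consume.
function creditsNeeded(mix: CallMix): number {
  return (
    mix.standard * CREDIT_COST.standard +
    mix.advanced * CREDIT_COST.advanced +
    mix.premium * CREDIT_COST.premium
  );
}

// Largest number of calls of a single tier that a credit allowance covers.
function maxCalls(tier: EndpointTier, planCredits: number): number {
  return Math.floor(planCredits / CREDIT_COST[tier]);
}

const PRO_CREDITS = 180000;

// Hypothetical monthly mix: mostly standard calls, some advanced and premium.
const exampleMix: CallMix = { standard: 100000, advanced: 10000, premium: 3000 };
const fitsPro = creditsNeeded(exampleMix) <= PRO_CREDITS;
```

The example mix needs 100,000 + 50,000 + 30,000 = 180,000 credits, which exactly fills the Pro allowance. At the same allowance, an advanced-only workload tops out at 36,000 calls and a premium-only workload at 18,000.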

Relationship to legal compliance

This section covers governance and data architecture. The legal dimensions of social media data scraping — CFAA, GDPR Article 6 lawful bases, platform Terms of Service, the hiQ v. LinkedIn precedent — are covered in depth in the legal and compliance implications of social media data scraping guide.

Glossary: Unified API, Aggregator, Scraper, Social Wall

These four terms are often used interchangeably in marketing materials. In practice, they describe different things. The distinction matters for technical buying decisions.

Unified API

A unified social media API is a single read endpoint that returns structured data from multiple platforms in one consistent schema. It is not a posting tool, a display widget, or an aggregator. It is an infrastructure primitive for data pipelines and AI workflows. SocialCrawl covers 21 platforms, 108 endpoints, and returns the same field names and types regardless of the source platform.

Aggregator

An aggregator (Flockler is a well-built example) combines social content from multiple platforms for display purposes: social walls, embedded feeds, moderated carousels. It reads social content but its output is visual, not structured data for downstream processing. Best for: marketing teams curating social proof on a website. Not a substitute for a read-side data API. See Instagram-specific endpoints for an example of what read-side data coverage looks like.

Web scraper

A web scraper extracts data from web pages by parsing HTML, often via headless browsers (Selenium, Playwright, Puppeteer) or HTTP clients. It requires no API key but is fragile, maintenance-heavy, and frequently violates platform Terms of Service in ways that carry legal risk. Best for: single-platform custom workflows or learning projects. Not recommended for production data pipelines at any significant scale.

Social wall service

A social wall service (Flockler, SociaVault) is a complete product for moderating, displaying, and sometimes scheduling social content. It sits above the API layer. It may use a unified API internally, but its interface is oriented to non-technical marketing and events teams, not developers building data systems. Best for: events, brand campaigns, and digital signage.

Each category excels in its lane. The distinction matters for technical buying decisions: a social wall is not a substitute for a read API, and a scraper is not a substitute for either.

Frequently Asked Questions

Why use a unified social media API?

Unified APIs reduce multi-platform integration from N parsers to one. Instead of learning separate response formats for each platform's native API, you write one integration and query any of the 21 platforms SocialCrawl covers. The single schema — followers, handle, bio, engagement_rate — eliminates platform-specific adapter code and reduces the maintenance burden when platforms deprecate endpoints or change versioning. For teams covering 3+ platforms, the time-to-value difference is weeks versus months. You can evaluate specific tools before committing.

What is a social media API?

A social media API is a programmatic interface that returns data from a social platform — profiles, posts, engagement metrics, follower counts — in a structured format. Native APIs (Instagram Graph API, TikTok Developer API, YouTube Data API v3) each return different field names and response structures. A unified social media API normalizes those differences: one request, one schema, regardless of which platform you queried.

How do APIs differ from scraping?

APIs are official, sanctioned data access endpoints provided by the platforms themselves. Scraping extracts data by parsing HTML, typically without platform authorization. APIs are faster, more reliable, and legal. Scraping is cheaper to start but fragile, unstable under platform changes, and frequently violates Terms of Service in ways that carry legal exposure. Unified APIs like SocialCrawl use authorized access methods — platform APIs or licensed endpoints — so your data pipeline has a clean provenance chain. The legal and compliance implications of social media scraping are covered in depth in a dedicated guide.

Instagram API vs. scraping: which is better?

For production use, the official Instagram Graph API is more reliable, legal, and stable than scraping — but it is restricted to approved business and creator accounts with explicit Meta App Review. Scraping Instagram directly requires sophisticated techniques and carries a real risk of account bans and Terms of Service liability. A unified API abstracts this complexity: it uses Instagram's official endpoints where available and authorized access methods where the native API scope is limited — all returned in the same schema, so your code does not need to handle the platform-specific access method. See Instagram-specific endpoints for coverage details.

What data can a social media API return?

A unified social media API can return user profiles (handle, followers, bio, verified status, creation date), posts (text content, engagement counts, media URLs, timestamps), comment threads, and computed enrichment fields: engagement_rate, estimated_reach, content_category, and language. SocialCrawl covers 21 platforms across 108 endpoints. Not every endpoint is available on every platform — coverage varies by what each platform's API exposes. The full endpoint documentation lists what is available per platform.

How do I choose a unified social media API?

Apply the AI-Agent Fitness Scorecard from this guide. Ask five questions: Does it normalize field names across platforms? Does it return computed fields? Is the schema deterministic (null when empty, never absent)? Does it support MCP integration? Are rate-limit headers and pagination predictable? SocialCrawl scores 5/5 on this rubric across 21 platforms, with plans starting at £14/month for 5,000 credits. See the pricing tiers for a full breakdown. A free tier at 100 credits lets you verify the schema before committing to a plan.

What are the rate limits and scaling options?

SocialCrawl does not impose per-minute request rate limits in the traditional sense — the credit system governs access instead. The free tier provides 100 credits; Starter (£14/month) provides 5,000; Growth (£49/month) provides 25,000; Pro (£299/month) provides 180,000. Enterprise volumes are available on custom plans. Credits never expire. Responses include standard HTTP headers for cache-control and retry guidance, and pagination for large result sets uses cursor-based navigation for predictable, agent-safe traversal. See pricing for full tier details.
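Cursor-based traversal of the kind mentioned above usually reduces to a short loop. The sketch below assumes a response shape with an `items` array and a `next_cursor` field that is null on the last page; those field names, the `CursorPage` type, and the `paginate` helper are hypothetical, since this guide only states that pagination is cursor-based.

```typescript
// Assumed page shape: a batch of items plus an opaque cursor for the next page.
interface CursorPage<T> {
  items: T[];
  next_cursor: string | null; // null means there are no more pages
}

// The page fetcher is injected; pass null to request the first page.
type FetchPage<T> = (cursor: string | null) => Promise<CursorPage<T>>;

// Yields items one at a time, following cursors until the last page.
// maxPages caps the walk so an agent cannot loop indefinitely on bad data.
async function* paginate<T>(fetchPage: FetchPage<T>, maxPages = 100): AsyncGenerator<T> {
  let cursor: string | null = null;
  for (let pageIndex = 0; pageIndex < maxPages; pageIndex++) {
    const page: CursorPage<T> = await fetchPage(cursor);
    yield* page.items;
    if (page.next_cursor === null) return;
    cursor = page.next_cursor;
  }
}
```

A caller consumes this with `for await (const item of paginate(fetchPage))`. Because each page costs credits, the `maxPages` cap also acts as a simple spend limit per traversal.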

The field-name differences covered in this guide will only grow as new platforms emerge and existing ones revise their API versioning. A unified schema layer absorbs those changes at the normalization layer — your integration code does not need to change when a platform deprecates a field name.

Paste any social profile URL into the Visual Explorer and you will see the normalized response before writing a single line of code. When you are ready to integrate, the SocialCrawl docs cover every endpoint, field, and authentication pattern across all 21 platforms.

Related posts

How to Use Claude Agents: A Developer's Guide to Managed Agents, Sub-agents, and Real-time Data

A developer's guide to Claude Managed Agents, Claude Code sub-agents, and the Messages API. Setup in 4 steps, parallel execution, MCP, rate limits.

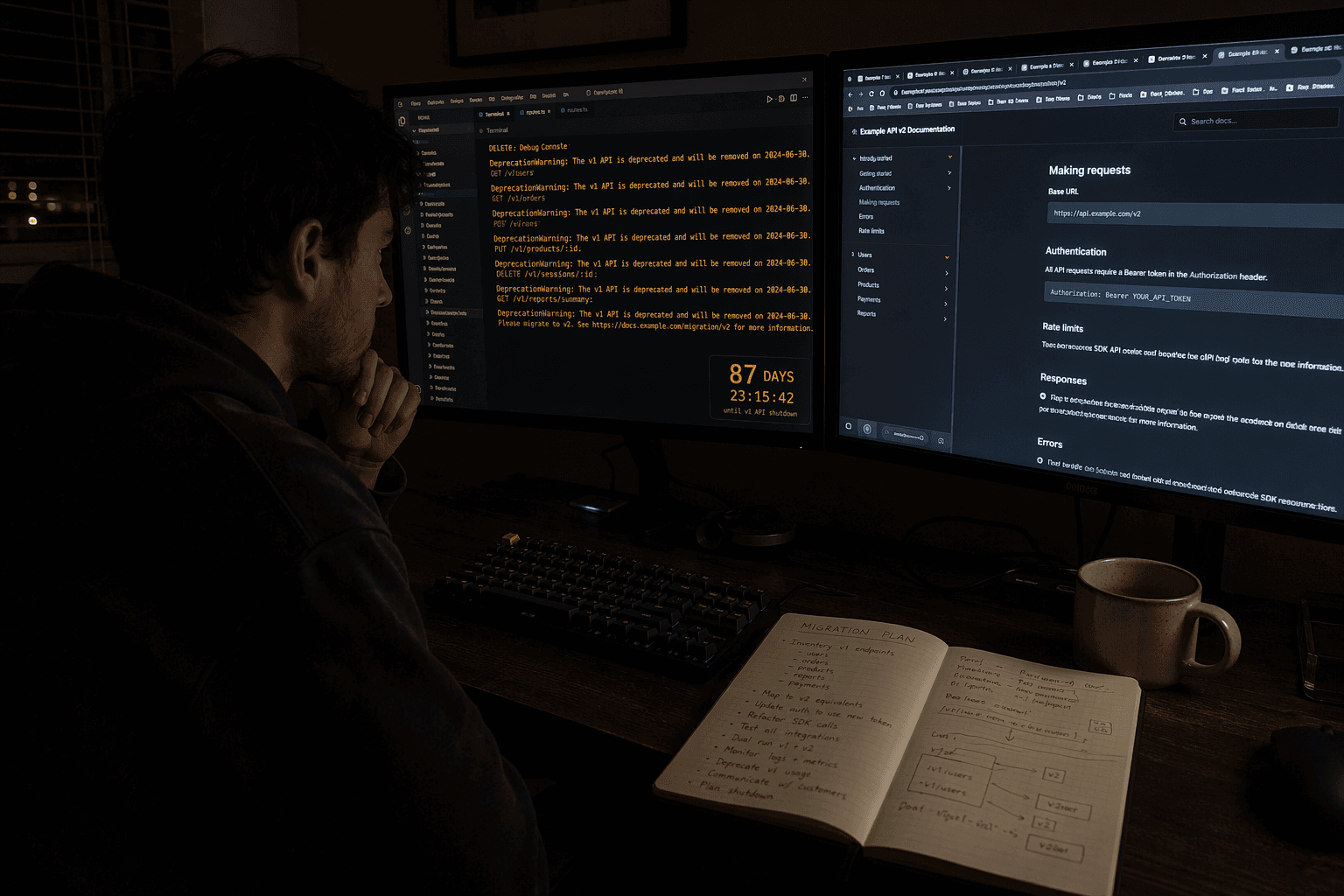

The OpenAI Assistants API in 2026: A Field Guide to the Shutdown, the Migration, and What Comes Next

OpenAI shuts down the Assistants API on 26 August 2026. A practical migration guide: Responses API, costs, frameworks, and the realistic timeline.

Browse AI Web Scraper: Where It Wins, and Where Social Data APIs Fit Better

Browse AI is great for web pages; social platforms need a specialist. Decision guide for developers choosing scrapers vs unified social-data APIs.