10 Best Social Media Scraping APIs in 2026: Ranked & Compared



Every social media scraping API promises a "consistent format." Then you call three of them and get back followerCount, fan_count, and edge_followed_by.count — and spend two weeks writing platform-specific adapters for data that should have been identical.

The best social media scraping APIs in 2026 solve that fragmentation at the schema level, not with documentation. These 10 tools range from general-purpose scrapers to purpose-built social APIs to enterprise infrastructure. Instagram now has 3 billion monthly active users (Reuters, Sept 2025). TikTok hit 1.99 billion (DemandSage/Statista, Q4 2025). The data is worth collecting. The question is which API actually makes it usable.

What makes a "best" social media scraping API in 2026?

The rankings below use these criteria — in order of importance for most development use cases:

- Platform coverage: How many platforms are covered, backed by a verified endpoint count rather than a vague "25+"? Any tool can claim a number; a defensible endpoint count is harder to fake.

- Unified schema: Does followers mean followers everywhere, or does your code still need a per-platform adapter? This is the difference between writing one integration and writing ten.

- Computed fields: Does the API deliver engagement_rate, estimated_reach, content_category, and language pre-calculated, or do you run the math yourself?

- AI-agent readiness: Can an LLM agent call this API reliably without platform-specific tool definitions? Inconsistent schemas break tool calls.

- Visual Explorer: Can you validate the response shape before writing a single line of integration code?

- Pricing model: Credits that never expire vs. monthly subscriptions whose credits expire at the end of the billing cycle. The TCO difference matters at scale.

- Benchmark success rates: An independent Proxyway benchmark from October 2025 tested 11 scraping APIs across 15 protected websites. Only 4 providers exceeded 80% success. Knowing which ones cleared that bar matters before you build.

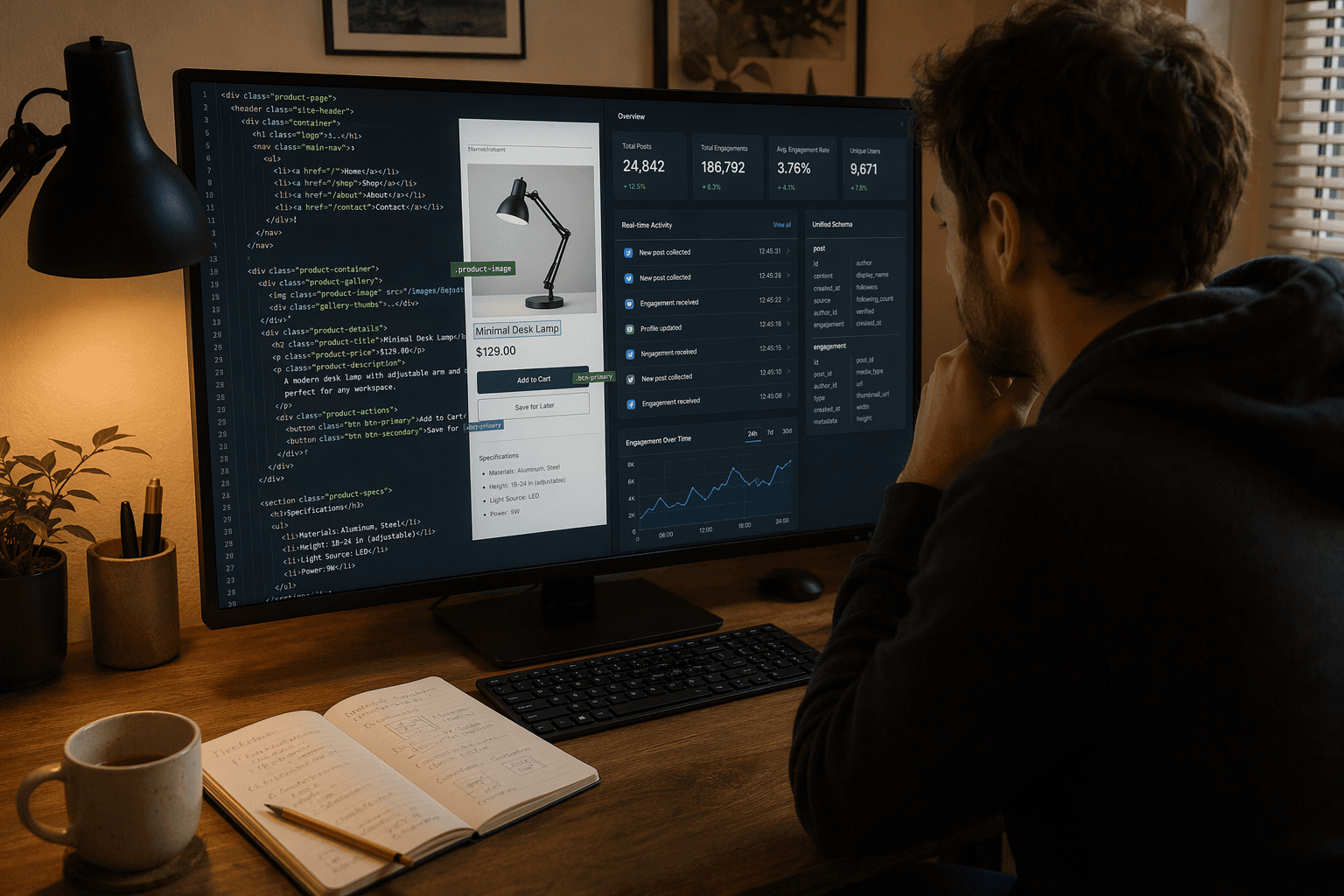

What does a unified schema actually look like? One creator, three platforms, one response

This is the core problem. Not in theory — in the actual JSON your integration receives.

For the same creator across three platforms, three different native API calls return three different field names for the same data:

| Field | TikTok native | Instagram native | YouTube native | SocialCrawl unified |
|---|---|---|---|---|
| Follower count | followerCount: 155000000 | edge_followed_by.count: 155000000 | statistics.subscriberCount: "155000000" | followers: 155000000 |
| Handle | uniqueId: "charlidamelio" | username: "charlidamelio" | customUrl: "@charlidamelio" | handle: "charlidamelio" |
| Bio | signature: "..." | biography: "..." | description: "..." | bio: "..." |

Same creator. Three API responses. Three different field names for follower count, three for handle, three for bio. If your integration calls all three platforms, you write three parsers.

SocialCrawl's unified schema collapses all of that to the right-hand column. followers is followers across all 21 platforms. handle is handle. bio is bio. The normalization extends to every field — including bio_link and external_url variants that differ per platform. On top of that, four computed fields arrive on every response: engagement_rate, estimated_reach, content_category, and language. No platform native API returns these. You'd calculate them yourself, or skip them entirely.
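To make the adapter burden concrete, here is a minimal sketch of the per-platform normalization a caller would otherwise write by hand. The native field names come from the table above; the payload nesting, the normalize_profile function, and the sample payloads are illustrative assumptions, not any vendor's actual implementation.

```python
from typing import Any


def normalize_profile(platform: str, raw: dict[str, Any]) -> dict[str, Any]:
    """Map one platform's native profile fields onto a shared shape."""
    if platform == "tiktok":
        return {
            "followers": int(raw["followerCount"]),
            "handle": raw["uniqueId"],
            "bio": raw["signature"],
        }
    if platform == "instagram":
        return {
            "followers": int(raw["edge_followed_by"]["count"]),
            "handle": raw["username"],
            "bio": raw["biography"],
        }
    if platform == "youtube":
        return {
            # The table shows YouTube's count as a string, so cast it.
            "followers": int(raw["statistics"]["subscriberCount"]),
            # customUrl carries a leading "@" that the other platforms omit.
            "handle": raw["customUrl"].lstrip("@"),
            "bio": raw["description"],
        }
    raise ValueError(f"unsupported platform: {platform}")


# Sample payloads shaped like the table rows (values are illustrative).
tiktok_raw = {"followerCount": 155000000, "uniqueId": "charlidamelio", "signature": "..."}
youtube_raw = {
    "statistics": {"subscriberCount": "155000000"},
    "customUrl": "@charlidamelio",
    "description": "...",
}

tiktok_profile = normalize_profile("tiktok", tiktok_raw)
youtube_profile = normalize_profile("youtube", youtube_raw)
```

Every additional platform adds another branch, plus its own type quirks such as the string-typed count and the "@" prefix handled here. A unified schema moves that branching to the API side.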

For the full technical explanation of what a unified social media API actually means — how the schema normalization works under the hood, and how to evaluate one — the concept guide covers it in depth.

How do the top social media scraping APIs compare? All 10 tools at a glance

The table below uses the same criteria applied in the rankings. ✓ marks a genuine capability; ✗ marks an absence; — marks unverified or not applicable.

ScraperAPI note: Independent Proxyway benchmark (October 2025) recorded 0% success on Instagram and Twitter endpoints specifically. Average overall: 63.7% vs 58.2% industry average.

SociaVault platform count note: SociaVault's "25+ platforms" figure includes ad libraries (Facebook Ad Library, Google Ad Library, LinkedIn Ad Library), Linktree, Amazon Shop, and TikTok Shop. Head-to-head social platform coverage is 8 platforms. That's still workable for many use cases — just count accurately.

| Tool | Platforms | Endpoints | Unified Schema | Computed Fields | Pricing Model | Starting Price | AI-Agent Ready | Visual Explorer | Free Tier | Code Sample |
|---|---|---|---|---|---|---|---|---|---|---|
| SocialCrawl | 21 | 108 | ✓ followers, handle, bio | engagement_rate, estimated_reach, content_category, language | PAYG credits (never expire) | Free 100cr / £14 for 5K cr | ✓ | ✓ | 100 credits | /docs |
| SociaVault | 8 social (25+ total incl. ad libs) | — | Partial | None | PAYG credits (never expire) | Free 50cr / $29 for 6K cr | Partial | ✗ | 50 credits | sociavault.com |
| Apify | 24,000+ actors | — | ✗ (per-actor) | None | Monthly sub + compute units (expire) | $5 cr/mo | Partial | ✗ | $5/mo credit | apify.com/docs |
| Bright Data | ~8 social | — | Partial | None | PAYG per 1K requests | ~$1.50/1K req | Partial | ✗ | ✗ | brightdata.com |
| ScraperAPI | General (0% IG/Twitter) | — | ✗ | None | Monthly sub (expire) | $49/mo 100K cr | Partial | ✗ | 1,000 credits | scraperapi.com |
| Smartproxy | Social API | — | ✗ | None | PAYG, 23K req min | ~$0.08/1K req† | ✗ | ✗ | ✗ | decodo.com |
| Oxylabs | ~3 social | — | ✗ | None | Enterprise custom | Custom | Partial | ✗ | Trial on request | oxylabs.io |
| ProfileSpider | LinkedIn only | — | — | None | Subscription | Unverified | ✗ | UI (lead gen) | Unverified | profilespider.com |
| Scrapfly | 7 social | — | ✗ | None | Monthly sub (expire) | Free 1K cr / $30 200K cr | Partial | ✗ | 1,000 credits | scrapfly.io/docs |
| RapidAPI | Varies by API | — | ✗ | None | Per-API (varies) | Varies | ✗ | ✗ | Varies | rapidapi.com |

† Smartproxy pricing sourced from Oxylabs competitor analysis — verify on decodo.com before committing.

Which are the 10 best social media scraping APIs in 2026? Ranked

1. SocialCrawl — unified schema across 21 platforms

Best for: developers who need multi-platform social data in a single consistent response shape, and AI agent workflows that can't afford platform-specific adapter logic.

SocialCrawl covers 21 platforms across 108 endpoints. The differentiator isn't the count — it's what the response looks like. Every call returns the same field names regardless of platform. followers is followers on TikTok, Instagram, YouTube, Reddit, LinkedIn, and 16 others. Your parser is written once.

The computed fields are the second thing worth knowing. Every response includes engagement_rate, estimated_reach, content_category, and language. No platform native API delivers these. They're calculated server-side so your integration receives data that's already ready for analysis or AI reasoning, not raw counts that need post-processing.

Here's what a real call looks like:

```bash
curl "https://socialcrawl.dev/v1/tiktok/profile?handle=charlidamelio" \
  -H "x-api-key: $SOCIALCRAWL_KEY"
```

```json
{
  "platform": "tiktok",
  "handle": "charlidamelio",
  "followers": 155000000,
  "bio": "dunkin' ordered for life",
  "engagement_rate": 0.042,
  "estimated_reach": 6510000,
  "content_category": "entertainment",
  "language": "en"
}
```

Swap tiktok for instagram, youtube, reddit, or any other supported platform and the response shape stays identical. Same parser, every platform.
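The same call can be sketched in Python using only the standard library. The base URL, query parameter, and header name are copied from the curl example; that other platforms follow the same /{platform}/profile path is an assumption, and build_profile_request and summarize are hypothetical helper names. The sketch parses a trimmed copy of the sample response rather than touching the network.

```python
import json
import urllib.parse
import urllib.request

BASE_URL = "https://socialcrawl.dev/v1"  # taken from the curl example above


def build_profile_request(platform: str, handle: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a profile request for the given platform."""
    query = urllib.parse.urlencode({"handle": handle})
    url = f"{BASE_URL}/{platform}/profile?{query}"
    return urllib.request.Request(url, headers={"x-api-key": api_key})


def summarize(profile: dict) -> str:
    """Format a profile using only unified field names, with no per-platform branches."""
    return f'{profile["handle"]} on {profile["platform"]}: {profile["followers"]:,} followers'


# Assumption: other platforms follow the same /{platform}/profile path pattern.
request = build_profile_request("instagram", "charlidamelio", "YOUR_KEY")

# Parse a trimmed copy of the sample response instead of calling the network.
sample_body = '{"platform": "tiktok", "handle": "charlidamelio", "followers": 155000000}'
summary = summarize(json.loads(sample_body))
```

To send the request for real, pass it to urllib.request.urlopen and decode the body with json.load. Check the platform docs for the actual paths before relying on the pattern assumed here.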

What we like:

- 21 platforms, 108 endpoints — broadest verified coverage in this comparison

- Unified schema means one integration handles every platform

- engagement_rate, estimated_reach, content_category, and language on every response

- Visual Explorer: paste a URL, see the unified schema response before writing a single line

- 100 free credits that never expire — enough to test across multiple platforms

- MCP skill integration for AI-agent workflows

What to watch:

- Newer entrant — the platform list will grow; some niche platforms are still in the pipeline

- Pricing is in GBP; if your budget is in USD, the conversion adds a mental step

Pricing: Free (100 credits, never expire) → Starter £14/mo (5,000 credits) → Growth £49/mo (25,000 credits) → Pro £299/mo (180,000 credits) → Enterprise

Verdict: The right call if you need multi-platform coverage without writing adapter code, or if you're building anything that feeds into an AI pipeline.

2. SociaVault — simplest pay-as-you-go API for 8 core platforms

Best for: developers who need a handful of mainstream social platforms and want the simplest possible REST API with credits that don't expire.

SociaVault is the closest direct competitor — social-focused, credit-based, pay-as-you-go. The platform count needs unpacking: SociaVault markets "25+ platforms," but that figure includes Facebook Ad Library, Google Ad Library, LinkedIn Ad Library, Linktree, Amazon Shop, and TikTok Shop alongside the social platforms themselves. Head-to-head social coverage is 8 platforms (SociaVault pricing page, verified April 2026). That's still a workable set for many use cases.

What SociaVault does not offer: computed fields (no engagement_rate, no estimated_reach), a unified schema across all platforms, or a visual data explorer. The response shape varies per platform. If you need to call multiple platforms, your code handles the field-name differences.

The pricing is competitive at volume. The Starter plan at $29 for 6,000 credits works out to roughly $0.0048 per credit. SociaVault's credits also never expire, a meaningful edge over tools whose unused credits lapse at the end of each month.
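The per-credit figure is simple division, and running it across every tier shows how the rate falls with volume. This sketch uses the SociaVault prices and credit counts quoted in this article; the names SOCIAVAULT_TIERS and cost_per_credit are illustrative.

```python
# Tier prices (USD) and credit counts as quoted in this article.
SOCIAVAULT_TIERS = {
    "Starter": (29, 6_000),
    "Growth": (79, 20_000),
    "Pro": (199, 75_000),
    "Enterprise": (399, 200_000),
}


def cost_per_credit(price: float, credits: int) -> float:
    """Return the price of a single credit for one tier."""
    return price / credits


per_credit = {
    name: cost_per_credit(price, credits)
    for name, (price, credits) in SOCIAVAULT_TIERS.items()
}

starter_rate = round(per_credit["Starter"], 4)  # 0.0048, matching the figure above
```

The same function works for any credit-based plan in the comparison table, which makes tiers from different vendors comparable as long as one credit buys a comparable request.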

For developers who want to understand what a unified social media API actually delivers vs. what SociaVault's partial approach gives you, the concept guide lays out the technical distinction.

What we like: Credits never expire. Low entry cost. Clean REST API. No compute-unit or per-request complexity.

What to watch: 8 social platforms vs 21. No computed fields. Platform-specific response shapes require adapter code per platform.

Pricing: Free (50 credits) → Starter $29/6K cr → Growth $79/20K cr → Pro $199/75K cr → Enterprise $399/200K cr

Verdict: Reasonable choice for 8 core platforms and simple use cases. Know what "25+ platforms" actually counts.

3. Apify — largest scraping actor marketplace

Best for: teams that need access to a marketplace of 24,000+ scraping actors and are comfortable with per-actor schema inconsistency.

Apify is a scraping platform, not a social media API. The distinction matters. Their marketplace has over 24,000 "Actors" — pre-built scrapers maintained by different developers. The TikTok Scraper has 159,000 users. The Instagram Scraper has 229,000. Each actor is independently maintained and returns its own response shape.

The practical result: if you're calling TikTok and Instagram data through Apify, you're working with two different response schemas written by two different developers. There's no platform-level normalization. Apify does have MCP integration in their ecosystem, which makes them more AI-agent compatible than tools with no structured output at all — but schema inconsistency across actors creates unreliable tool calls for any agent that needs to generalize.

Credits expire at the end of each billing cycle, which changes the TCO math for variable-volume workloads. The free tier provides $5/month in credit — minimal for real use.
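The effect of monthly expiry on total cost can be sketched with a few lines of arithmetic. The plan price, allowance, and usage pattern below are hypothetical numbers chosen for illustration, not any vendor's real figures.

```python
def wasted_credits(monthly_allowance: int, monthly_usage: list[int]) -> int:
    """Credits lost when unused allowance expires at the end of each cycle."""
    return sum(max(monthly_allowance - used, 0) for used in monthly_usage)


def effective_cost_per_used_credit(
    price_per_month: float, monthly_allowance: int, monthly_usage: list[int]
) -> float:
    """Total spend divided by credits actually consumed (capped at the allowance)."""
    total_spend = price_per_month * len(monthly_usage)
    total_used = sum(min(used, monthly_allowance) for used in monthly_usage)
    return total_spend / total_used


# Hypothetical plan: $50/month for 10,000 credits, with uneven usage over four months.
usage = [2_000, 10_000, 1_000, 7_000]
lost = wasted_credits(10_000, usage)
cost = effective_cost_per_used_credit(50.0, 10_000, usage)
nominal = 50.0 / 10_000
```

With this usage pattern, half of the purchased credits expire unused, so the effective price per consumed credit is double the nominal rate. Steadier workloads narrow the gap; spikier ones widen it.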

Enterprise credentials are real: SOC2 certified, GDPR and CCPA compliant, used by T-Mobile, Microsoft, Accenture.

What we like: Largest actor marketplace (24,000+). MCP integration. Enterprise compliance certifications. Open-source Crawlee library (22,809 GitHub stars). The Apify scraper ecosystem for Instagram alone has 229,000 users.

What to watch: No unified schema across actors. Credits expire monthly. Schema consistency depends entirely on which actor you use and who maintains it.

Pricing: Free ($5 cr/mo, expires) → Starter $29/mo → Scale $199/mo → Business $999/mo → Enterprise (custom)

Verdict: Best when you need a specific niche scraper that doesn't exist elsewhere. Accept that schema normalization is your problem, not Apify's.

4. Bright Data — enterprise-scale infrastructure

Best for: high-volume teams that need > 80% benchmark success on difficult targets and have the budget for enterprise minimums.

Bright Data covers approximately 8 social platforms with dedicated scrapers: Instagram, TikTok, Facebook, LinkedIn, YouTube, Twitter/X, Reddit, and Pinterest. The response data is platform-specific — not normalized across platforms — and there are no computed fields on social responses. For teams focused on social media data scraping at enterprise volume, this is the infrastructure-first option in the list.

The reason Bright Data appears this high on the list: benchmark performance. The independent Proxyway October 2025 benchmark tested 11 providers against 15 protected sites at scale. Only four exceeded 80% success rates. Bright Data was one of them.

The legal record matters here. In January 2024, a federal judge ruled against Meta in its lawsuit against Bright Data. The court found that Bright Data could only have violated Meta's Terms of Service if Meta proved Bright Data scraped while logged into a Meta account, and Meta failed to prove it. The ruling reinforced that enterprise-grade public-data scraping can survive legal scrutiny when conducted correctly. (Lection.app legal analysis, Jan 7, 2026)

No free tier for social scraping. Enterprise minimums apply. Not self-serve at low volume.

What we like: Top-tier benchmark performance (one of only 4 above 80%). Reinforced legal standing via Meta ruling. Strongest reliability track record at scale.

What to watch: No unified schema. No computed fields. Not self-serve. Pricing requires sales contact — enterprise commitment expected.

Pricing: ~$1.50/1K requests (benchmark data); enterprise contracts required for most social use cases

Verdict: Right tool for high-volume, difficult targets where benchmark reliability justifies the cost and complexity.

5. ScraperAPI — general web scraper with social limitations

Best for: general web scraping and e-commerce data. Not recommended as a primary social media scraping tool.

ScraperAPI averaged 63.7% success across the Proxyway October 2025 benchmark — above the 58.2% industry average overall. On Instagram and Twitter/X specifically, the same benchmark recorded 0% success. For Amazon and Zillow, it works well. For social, those numbers don't support it.

The credit multiplier structure compounds the problem. The base rate is 1 credit per request. LinkedIn costs 30 credits per request. Add JavaScript rendering (+10 credits) and premium proxies (+10 to 30 credits), and an ultra-premium rendered LinkedIn request consumes 75 credits. The $49/month Hobby plan covers only 1,333 requests at that rate — not the 100,000 advertised on the plan page.
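The arithmetic behind that 1,333 figure is worth checking for your own target mix. A minimal sketch, using the plan size and the 75-credit cost quoted above; the function name is illustrative.

```python
def effective_requests(plan_credits: int, credits_per_request: int) -> int:
    """Number of whole requests a credit allowance covers at a given multiplier."""
    return plan_credits // credits_per_request


HOBBY_PRICE_USD = 49
HOBBY_CREDITS = 100_000

base = effective_requests(HOBBY_CREDITS, 1)       # 1 credit per request
linkedin = effective_requests(HOBBY_CREDITS, 75)  # ultra-premium rendered LinkedIn request

cost_per_linkedin_request = HOBBY_PRICE_USD / linkedin
```

At 75 credits per request, the plan covers 1,333 requests, which puts the effective price near $0.037 per request rather than the $0.00049 implied by the headline credit count.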

Twitter/X adds a further consideration: the official API's free tier has become extremely limited, and ScraperAPI's 0% benchmark result on both Instagram and Twitter/X makes it a difficult choice for any social-first use case. For the full picture on social media scraping legality and ToS risk, see the legal and technical guide.

What we like: Strong on Amazon, Zillow, and general web targets. Simple setup. One API call handles proxy rotation and CAPTCHA. LangChain integration documented.

What to watch: 0% success on Instagram and Twitter specifically (Proxyway Oct 2025). Credits expire monthly. Credit multipliers make the advertised cost misleading for difficult targets.

Pricing: Free (1,000 credits, expires) → Hobby $49/mo (100K cr) → Startup $149/mo (1M cr) → Business $299/mo (3M cr)

Verdict: Fine for general web scraping. For social-specific endpoints, especially Instagram or Twitter/X scraping, the benchmark numbers are a real problem.

6. Smartproxy (now Decodo) — proxy-first social scraping

Best for: teams with existing proxy infrastructure who need social media endpoints layered on top.

Smartproxy's Social Media Scraping API is a proxy-first product — the emphasis is on anti-bot bypass and request infrastructure, not normalized data output. Response data is raw HTML/JSON from the target site. There are no computed fields and no unified schema across platforms.

The benchmark result is strong: Smartproxy (Decodo) was one of the four providers that exceeded 80% success in the Proxyway October 2025 benchmark. That's infrastructure reliability, not API ergonomics.

The minimum commitment is 23,000 requests, with pricing starting around $0.08 per 1,000 requests for the Core tier (sourced from Oxylabs competitor analysis, January 2026 — verify current pricing on decodo.com directly). No free tier.

What we like: Top-4 benchmark success rate. Good anti-bot bypass. Solid infrastructure for teams already working at scale.

What to watch: Not a social-data API in the traditional sense. No unified schema. No computed fields. Minimum 23K request commitment. Pricing from competitor source — verify independently.

Pricing: ~$0.08/1K requests (Core tier), 23K request minimum†

Verdict: Makes sense if you're buying infrastructure. Doesn't solve the schema problem.

7. Oxylabs — enterprise reliability, enterprise price

Best for: Fortune 500 teams with the budget for enterprise contracts and a requirement for maximum uptime SLAs.

Oxylabs covers approximately three social platforms with dedicated scrapers: Instagram, TikTok, and LinkedIn. The Web Scraper API is general-purpose. Like Bright Data, it's an enterprise infrastructure product — not a developer-friendly social API with unified schema or computed fields.

The benchmark position is real: Oxylabs was one of the four providers above 80% success in the Proxyway October 2025 benchmark, alongside Zyte, Decodo, and ScrapingBee. That's the honest case for including it here.

Pricing is enterprise-only. No published standard rates. No free tier (a trial is available on request). If you're at startup scale, this isn't the right evaluation.

What we like: Top-4 benchmark success. Enterprise compliance credentials. Proven at Fortune 500 scale.

What to watch: No unified social schema. No computed fields. Pricing requires sales engagement. Minimal self-serve option.

Pricing: Enterprise custom (requires sales contact)

Verdict: Justified for large organizations with strict SLA requirements. Overkill for most developer use cases.

8. ProfileSpider — LinkedIn lead-gen, not a data API

Best for: salespeople and recruiters who need LinkedIn profile extraction via a Chrome extension, not an API.

ProfileSpider is a Chrome extension — it is not an API and cannot be called programmatically. This matters for developers: there's no endpoint to query, no response JSON to parse, no integration to build. The tool is designed for non-technical users extracting LinkedIn profile data for sales or recruiting workflows, with CSV export.

The reason it appears in social scraping comparisons is keyword overlap, not category overlap. If you're a developer building a data pipeline, this is the wrong tool. If you're a recruiter who wants to pull LinkedIn data without writing code, it serves a genuine purpose — but that's a different category.

What we like: Solves a specific LinkedIn lead-gen problem for non-technical users. No API knowledge required.

What to watch: Not an API. Not programmable. LinkedIn only. Subscription pricing — tiers not independently verified; check profilespider.com directly.

Pricing: Subscription (tiers unverified — check site)

Verdict: Wrong category for developers. Useful for recruiters and salespeople who need a UI-based LinkedIn scraper.

9. Scrapfly — strong infrastructure, platform-specific schemas

Best for: developers who want a technically capable scraping platform with strong tooling and don't need cross-platform schema normalization.

Scrapfly has dedicated open-source scrapers for 7 platforms (Instagram, Twitter/X, TikTok, LinkedIn, YouTube, Facebook, and Threads) published on GitHub. The platform serves 55,000+ developers and processes 15 billion+ API requests per month.

The limitation for multi-platform use cases: no unified schema. Each platform still returns its own field structure. Scrapfly's LLM-based Extraction API can impose a schema on top of raw data, but that's a separate product with additional cost. The base scraping API returns what the target site returns. Credits expire monthly.

For developers who want to learn how social scraping works at a technical level — proxy rotation, browser fingerprinting, platform difficulty differences — Scrapfly's documentation and blog are genuinely among the best in the category.

What we like: Strong technical documentation. Open-source scrapers for 7 platforms. Large developer community. Daily engine updates (average 5 versions/day).

What to watch: No unified schema across platforms. Credits expire monthly. LLM extraction is a separate product. Social schema normalization is the caller's problem.

Pricing: Free (1,000 credits) → Discovery $30/mo (200K cr) → Pro $100/mo (1M cr) → Startup $250/mo (2.5M cr) → Enterprise $500/mo (5.5M cr)

Verdict: Good choice for a YouTube scraper and similar use cases where you're working one platform at a time and want strong infrastructure. Doesn't solve multi-platform schema fragmentation.

10. RapidAPI aggregators — marketplace discovery, not standardization

Best for: early-stage discovery of niche social APIs before committing to a specific vendor.

RapidAPI is a marketplace, not a product. Individual social data APIs listed on the platform are independently built by different providers, with different response schemas, different reliability profiles, and different pricing structures. There's no unified schema across the marketplace by definition.

The value of RapidAPI is discovery — it's a place to find niche social APIs that might not appear in a direct vendor search. It's not a place to build a production-grade integration that requires consistent output. Every API on the marketplace has its own documentation, its own error handling, and its own rate limits.

What we like: Useful for finding niche or platform-specific scrapers early in evaluation. No single-vendor lock-in during exploration.

What to watch: No standardization across listed APIs. No unified schema. Adds an indirection layer. Production reliability depends entirely on the individual API provider, not RapidAPI.

Pricing: Varies per API listed (per-request, monthly subscription, or freemium — depends on vendor)

Verdict: A starting point, not an endpoint. Good for discovery; not what you build a data pipeline on.

Which API fits which workflow?

The short answer:

- Multi-platform + unified schema + AI agents → SocialCrawl (21 platforms, 108 endpoints, one response shape, computed fields included)

- 8 core platforms, simple PAYG, no schema requirements → SociaVault (credits never expire, lowest mental overhead)

- Maximum actor flexibility, custom scrapers for anything → Apify (24,000+ actors; accept per-actor schema variance)

- Enterprise scale, highest benchmark success rates → Bright Data or Oxylabs (two of four providers above 80% on Proxyway benchmark)

- LinkedIn leads via UI, no API → ProfileSpider (different category; not a developer tool)

- Building an AI agent that calls social APIs → SocialCrawl (Visual Explorer: see your data before writing a single line)

One practical note on the "AI agent" scenario: agents that call multi-platform social APIs need consistent field names to write reliable tool definitions. If followers means something different per platform, the agent's tool call breaks. The unified schema is an AI-agent requirement disguised as a convenience feature.
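A sketch of what that looks like in practice: with a unified schema, one tool definition can cover every platform, with the platform passed as an argument. The tool name, the platform list, and the envelope keys below are hypothetical; the parameters use JSON Schema conventions, and the exact wrapper varies by LLM provider.

```python
# Hypothetical tool definition; the name, platform list, and envelope keys are illustrative.
PLATFORMS = ["tiktok", "instagram", "youtube", "reddit", "linkedin"]

get_profile_tool = {
    "name": "get_social_profile",
    "description": "Fetch a public profile and return unified fields.",
    "input_schema": {
        "type": "object",
        "properties": {
            "platform": {"type": "string", "enum": PLATFORMS},
            "handle": {"type": "string"},
        },
        "required": ["platform", "handle"],
    },
}


def validate_call(arguments: dict) -> bool:
    """Minimal check of an agent's tool-call arguments against the schema above."""
    schema = get_profile_tool["input_schema"]
    has_required = all(key in arguments for key in schema["required"])
    return has_required and arguments["platform"] in schema["properties"]["platform"]["enum"]
```

Without a shared response shape, the same agent would need one tool per platform, each with its own output description, and the model would have to remember which field name means follower count in each.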

Platform-specific starting points: If you only need one platform, the entry cost is lower. A TikTok scraper or Instagram scraper for profile data can be tested through SocialCrawl's free 100 credits before committing. For a Twitter scraper or Facebook scraper, the same applies — call a single endpoint, check the response shape, then decide whether the unified schema or a single-platform tool fits your volume. A Reddit scraper for community and post data is one of the higher-signal use cases for AI agents, given the text richness of Reddit threads.

For deeper coverage on what each platform endpoint returns, see the platform docs.

7 questions to ask before you buy a social media scraping API

- How many platforms does it actually cover? Distinguish social platforms from ad libraries, e-commerce shops, and link aggregators. "25+" can mean 8 social platforms plus 17 adjacent services. A defensible number has a corresponding endpoint list you can verify.

- Does it return a unified response schema or platform-specific field names? The practical test: call TikTok data and Instagram data with the same integration, no per-platform conditional logic. If your code contains a branch like if platform == 'instagram': response['edge_followed_by']['count'], you don't have a unified schema.

- Does it include computed fields, or does your code run the math? engagement_rate, estimated_reach, content_category, and language are computed fields you'd otherwise calculate from raw counts. Receiving them pre-calculated cuts post-processing work, and it's the difference between a scraper and an analysis-ready API.

- Do credits expire? SocialCrawl and SociaVault credits never expire. Apify and ScraperAPI credits reset at the monthly billing cycle. For teams with variable-volume workloads, monthly expiration changes the real cost significantly, because unused credits are lost.

- Can you preview data without writing code first? The SocialCrawl Visual Explorer lets you paste a URL and see the unified schema response immediately. Most tools require: sign up → generate API key → write code → parse response → debug. That sequence adds friction before you know if the response shape fits your use case.

- What are the actual benchmark success rates on Instagram and Twitter? Ask for this number before building. The Proxyway October 2025 benchmark found that one major provider in this comparison averaged 0% on both endpoints. That's not a rounding error; it means the tool doesn't work for those platforms. Know this before you're three weeks into an integration.

- Is it AI-agent ready? A consistent schema is necessary but not sufficient. MCP integration, deterministic response shapes, and transparent rate-limit handling all matter when an agent needs to retry, paginate, or chain calls. See the social media scraping legal and technical guide for compliance questions in this context.
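For the computed-fields question, it helps to see what running the math yourself involves. The sketch below uses one common definition of engagement rate, interactions divided by followers. That formula is an assumption for illustration; this article does not state how any vendor calculates its engagement_rate field.

```python
def engagement_rate(likes: int, comments: int, shares: int, followers: int) -> float:
    """Interactions per follower; returns 0.0 when the follower count is zero."""
    if followers == 0:
        return 0.0
    return (likes + comments + shares) / followers


# Hypothetical per-post averages for an account with 10,000 followers.
rate = engagement_rate(likes=350, comments=50, shares=20, followers=10_000)
```

The formula is short, but the inputs are not: each platform names and nests likes, comments, and shares differently, so the raw counts have to be normalized before this function can be called at all.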


Frequently asked questions

What is social media scraping?

Social media scraping is the automated extraction of public data from social platforms — posts, profiles, follower counts, engagement metrics — using web scrapers or APIs instead of manual collection. A scraping API handles the platform-specific complexity (anti-bot measures, rate limits, JavaScript rendering) and returns clean structured data. The alternative is building your own scraper per platform, which means maintaining it every time TikTok changes its frontend or Instagram updates its Graph API schema. For the technical and legal breakdown, see the social media scraping legal and technical guide.

How does social media scraping work?

Scrapers send HTTP requests to social platforms, parse the HTML or JSON response, and extract structured fields. API-based tools like SocialCrawl handle proxy rotation, browser fingerprinting bypass, and rate limiting automatically: you call one endpoint and get back clean JSON. A unified API goes further by normalizing response shapes so followers is followers regardless of which platform returned the data, which means your integration code doesn't branch per platform.
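As one illustration of what a managed API absorbs, here is a minimal sketch of the rate-limit handling a do-it-yourself scraper needs: retry with exponential backoff. The RateLimited exception and fetch_with_backoff helper are hypothetical names, and the fetch callable stands in for whatever HTTP client you use.

```python
import time
from typing import Callable


class RateLimited(Exception):
    """Raised by a fetch function when the upstream responds with a rate limit."""


def fetch_with_backoff(
    fetch: Callable[[], dict],
    max_attempts: int = 4,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> dict:
    """Call fetch, doubling the wait after each rate-limited attempt."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return fetch()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            sleep(base_delay * 2 ** attempt)
    raise AssertionError("loop always returns or raises")
```

The sleep function is injectable so the logic can be tested without waiting. A production scraper would add jitter, proxy rotation, and per-platform limits on top, and that is the maintenance a managed API takes over.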

What are the best social media scraping APIs?

SocialCrawl leads for unified schema across 21 platforms, with computed fields (engagement_rate, estimated_reach, content_category, language) on every response. SociaVault is the simplest option for 8 core platforms at low volume. Apify excels for custom actors when you need a scraper that doesn't exist as a managed API. Bright Data and Oxylabs suit enterprise scale with verified benchmark reliability. ScraperAPI suits general web scraping, but note its 0% success rate on Instagram and Twitter specifically (Proxyway October 2025 benchmark). Try the Visual Explorer to test response shape before committing to an integration.

Why use a unified social media API?

A unified API eliminates the platform fragmentation that makes multi-platform integrations expensive to build and maintain. Instead of writing ten response parsers — one for TikTok's followerCount, one for Instagram's edge_followed_by.count, one for YouTube's statistics.subscriberCount — you write one integration that handles all platforms. SocialCrawl's unified schema returns followers everywhere across all 21 platforms. For AI agents especially, this removes the need for platform-specific tool definitions — a single tool call shape works for any platform in the dataset. The complete guide to unified APIs covers the technical architecture in detail.

What are the best social media scraping tools?

The best tool depends on the use case. Multi-platform coverage with a consistent response schema: SocialCrawl (21 platforms, 108 endpoints, unified schema, computed fields). Custom actors and maximum flexibility: Apify (24,000+ actors, MCP integration). Enterprise reliability: Bright Data or Oxylabs (both in the top-4 Proxyway benchmark performers). The simplest PAYG API across 8 platforms: SociaVault. LinkedIn lead-gen without API knowledge: ProfileSpider — though that's a different category entirely. The comparison table above covers all ten options with verified numbers.

Are free social media scraping tools worth using?

For small projects and integration testing, yes. SocialCrawl's 100 free credits never expire — enough to call the Visual Explorer across multiple platforms and validate the response shape before writing integration code. Scrapfly offers 1,000 free credits, which is a meaningful testing window. Open-source options (Playwright, Selenium, requests) work but require you to maintain proxy rotation, rate-limit handling, and per-platform parsers yourself. For anything production-grade, the maintenance overhead of a DIY scraper typically exceeds the cost of a managed API within a few months. See pricing details for the free tier breakdown.

What do I need to know about social media scraping compliance?

Always check the platform's Terms of Service first. The key legal precedent: the Ninth Circuit's April 2022 ruling in hiQ v. LinkedIn established that scraping publicly accessible data likely does not violate the Computer Fraud and Abuse Act, because "without authorization" applies to systems where access is restricted, not to publicly visible data. That ruling still stands. The December 2022 settlement, in which hiQ paid $500,000 and agreed to destroy scraped data, stemmed from its use of fake accounts to access non-public data, not from public scraping itself. (Lection.app legal analysis, Jan 7, 2026) GDPR applies to the personal data of people in the EU regardless of public visibility; processing it for commercial purposes requires a lawful basis under Article 6. For a full jurisdiction-by-jurisdiction analysis, see the social media scraping legal and technical guide.

If you need multi-platform social data without adapter code, SocialCrawl's unified schema, computed fields, and Visual Explorer are three things no single competitor in this list matches together. The fastest way to verify that claim is to paste a social URL into the Explorer and see the unified response before writing a line of integration code. If you want to understand what a unified social media API means at the architecture level — or if you want the full picture on scraping legality and compliance before building — both guides cover the ground this post pointed to.
