Social Media Scraping in 2026: Legal & Technical Primer

Scraping social media is legal in the US post-hiQ. This guide covers 7 platforms, 4 jurisdictions, GDPR/CCPA, rate-limit ethics, and technical trade-offs.

Social media scraping — the automated extraction of public data from social platforms — is not one problem. Scraping TikTok is not the same problem as scraping LinkedIn, which is not the same problem as scraping YouTube. This guide covers the legal ground, the technical trade-offs per platform, and how to pick an approach without burning a sprint on infrastructure you'll throw away.

Post-hiQ, the CFAA question in the US is settled. But the GDPR question isn't. The ToS question isn't. The rate-limit ethics question isn't. And the honest case for when DIY scraping stops making financial sense — that one's usually missing entirely. This guide covers all of them, with citations.

This is not legal advice. The analysis below is a summary of publicly available case law and regulation. Consult a lawyer for your specific situation.

Key Takeaways

- Social media scraping is legal in the US for publicly accessible data after hiQ v. LinkedIn: the Ninth Circuit ruled the CFAA likely does not apply. But hiQ Labs, the company, paid a $500,000 settlement, destroyed all scraped data, and is effectively defunct. The precedent stands; the plaintiff didn't. (Lection.app)

- Platform Terms of Service create a parallel civil liability that CFAA rulings do not touch. Instagram, LinkedIn, and TikTok explicitly ban automated access. Reddit explicitly permits it. This is the actual risk differential — not CFAA.

- GDPR treats any scraper processing EU residents' data as a data controller — "publicly available" is not a lawful basis. Article 6 requires a documented legitimate-interest balancing test; Article 30 requires Records of Processing Activities. Both apply regardless of whether the source data was publicly posted.

- The CCPA "publicly available" exemption (§ 1798.140(v)(2)) covers government records only — not social media posts. Public Instagram profiles are not exempt. Most guides get this wrong.

- Rate-limit ethics is not just etiquette: overwhelming a server can constitute trespass to chattels under common law, independent of CFAA and ToS. Polite defaults (~1 req/sec, honor Retry-After headers) keep you inside the safety zone.

What Is Social Media Scraping?

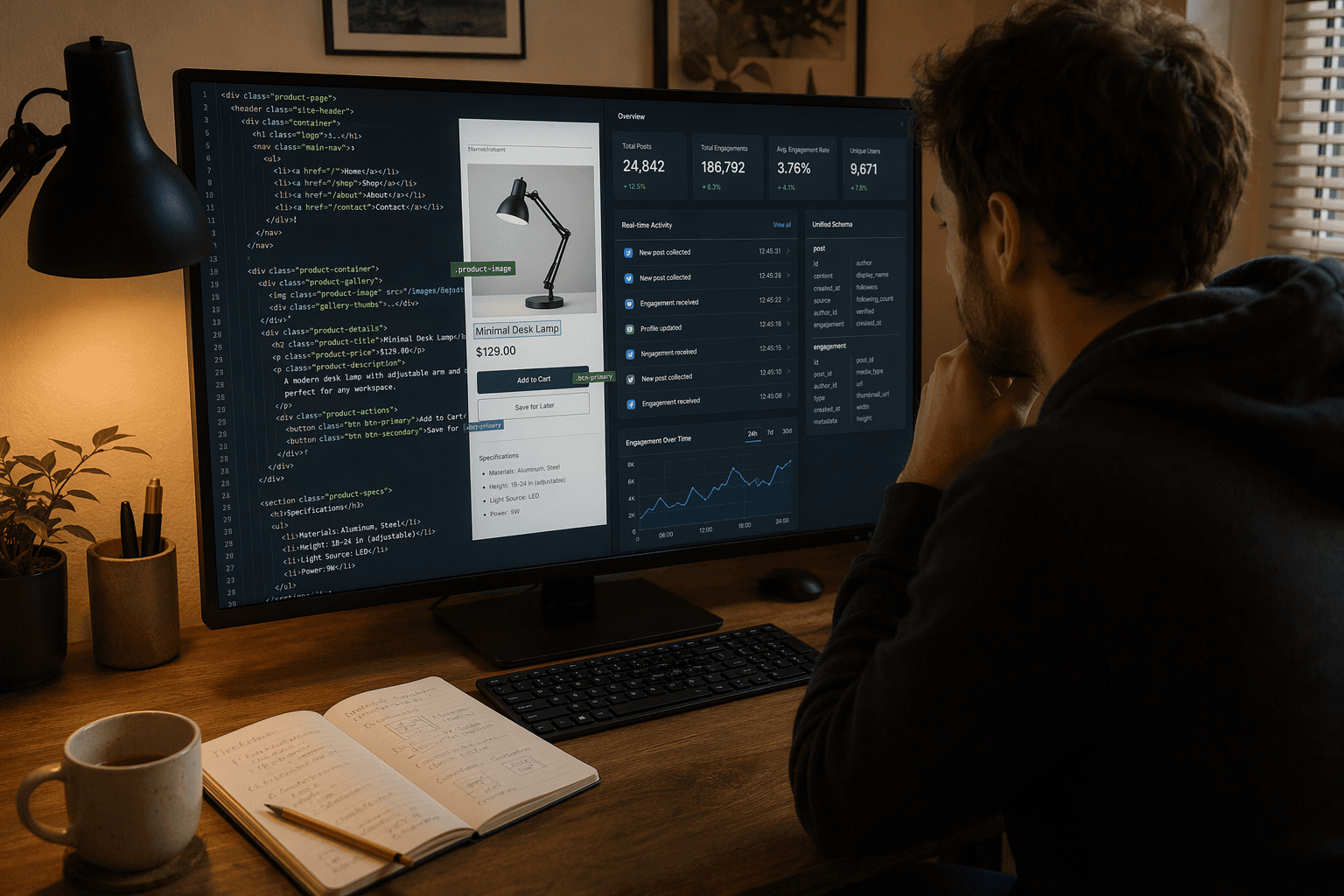

Social media scraping is the automated extraction of public data — posts, profiles, follower counts, engagement metrics, timestamps — from social platforms without the platform's explicit permission, typically using bots, HTTP clients, or headless browsers instead of official API access.

Three things it is not:

Official platform APIs are sanctioned, rate-limited, platform-controlled data channels. Instagram's Graph API, Twitter's v2 API, YouTube's Data API — these are authorized access routes. They return specific fields at specific quotas. They are legal by definition.

Data aggregators ingest from multiple platforms and re-expose data — Flockler, for example, is read/write for content curation. They operate under platform agreements.

Unified APIs — like SocialCrawl — use authorized API access and normalize the response into a single schema across 21 platforms. This is not scraping in the legal sense; it's authorized access with schema transformation on top.

Scraping sits outside all three. It's HTTP requests (or headless browser sessions) aimed at platform endpoints without platform authorization. The data you can pull includes usernames, follower counts, post text, engagement metrics, timestamps, and in many cases structured metadata like geolocation or hashtags — whatever the public-facing HTML or JSON exposes.

Use cases: competitive intelligence, brand monitoring, academic research, AI training data, social analytics. The use case doesn't change the legal status — the method and the jurisdiction do.

How Does Social Media Scraping Work?

Scrapers send HTTP requests to platform endpoints, parse the HTML or JSON response, extract structured fields, then store or forward the data. That's the whole flow:

HTTP request → platform endpoint (or headless browser render)

↓

HTML / JSON response received

↓

Parser extracts fields (post text, author, follower count, engagement)

↓

Structured data → storage / downstream system

The practical split is JavaScript rendering. Reddit and older Twitter endpoints return clean JSON from plain HTTP calls. Instagram and TikTok render their content client-side — you get an empty shell from a plain requests.get(). That means headless browsers: Puppeteer (Node), Playwright (multi-language), or Selenium (Python).

Here's the simplest honest example — Reddit, because it's the platform where scraping is explicitly permitted and where plain HTTP actually works:

```python
import time

import requests

# Reddit allows public scraping via its JSON API
# Rate limit: 1 request per second (respectful baseline)
url = "https://www.reddit.com/r/python/top.json?t=week&limit=25"
headers = {
    "User-Agent": "MyResearchBot/1.0 (contact: you@example.com)"
}

response = requests.get(url, headers=headers, timeout=10)
response.raise_for_status()
data = response.json()

for post in data["data"]["children"]:
    item = post["data"]
    print(item["title"], "- score:", item["score"])

time.sleep(1)  # polite: 1 req/sec ceiling before any follow-up request
```

See the full code library for platform-specific examples, including headless browser patterns for Instagram and TikTok.

The honest trade-off: this approach runs at 1–10 req/sec, breaks when HTML structure changes, and is detectable. On Reddit that's fine — on TikTok, the production half-life of a working scraper is roughly 4–6 weeks before fingerprinting catches up and breaks it. That's the engineering reality, and it shapes every downstream decision in this guide.
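The parse step of the flow can be kept separate from the network call, which makes it testable offline and limits the damage when the response shape drifts. The following is a minimal sketch, assuming a Reddit-style listing payload; the function name extract_posts, the sample payload, and the choice of the author and created_utc fields are illustrative assumptions, not part of the example above.

```python
from typing import Any


def extract_posts(listing: dict[str, Any]) -> list[dict[str, Any]]:
    """Pull a few structured fields out of a Reddit-style listing payload.

    Missing keys fall back to defaults instead of raising, so one malformed
    item does not abort the whole batch.
    """
    records = []
    for child in listing.get("data", {}).get("children", []):
        item = child.get("data", {})
        records.append(
            {
                "title": item.get("title", ""),
                "author": item.get("author"),
                "score": item.get("score", 0),
                "created_utc": item.get("created_utc"),
            }
        )
    return records


# Hypothetical sample payload, trimmed to the fields used above.
sample = {
    "data": {
        "children": [
            {"data": {"title": "Hello", "author": "alice", "score": 42, "created_utc": 1700000000}},
            {"data": {"title": "No score field"}},
        ]
    }
}

posts = extract_posts(sample)
for record in posts:
    print(record["title"], record["score"])
```

Keeping the parser tolerant of missing keys is a design choice: it trades silent defaults for resilience, so a production version would probably also log which fields were absent.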

Is Social Media Scraping Legal in 2026?

In the US, scraping publicly accessible data is legal — the Computer Fraud and Abuse Act does not apply. But platform Terms of Service create a parallel civil liability, and GDPR/CCPA apply in the EU and California regardless of CFAA status.

The three-layer legal framework:

- CFAA (US criminal and civil law): Does NOT apply to publicly accessible data post-hiQ Labs v. LinkedIn (Ninth Circuit, 2022), reinforced by Van Buren v. United States (Supreme Court, 2021). No login required = no unauthorized access = no CFAA liability. This is settled in the Ninth Circuit.

- Terms of Service (civil contract law): Does apply. Violating ToS is a breach of contract, not a criminal charge. But platforms litigate on it: LinkedIn pursued breach-of-contract claims against hiQ and secured a $500K settlement even after losing on CFAA grounds. The financial exposure is real.

- Data protection law (GDPR, CCPA): Jurisdiction-specific, applies to how you store and use the data even when collection is legal. A US scraper pulling EU residents' public Instagram data is still a GDPR data controller.

For a full platform-by-platform breakdown, see the 7×4 matrix below. For regional compliance guidance, see /docs/compliance.

The hiQ Case: What Actually Changed (and What Didn't)

The hiQ v. LinkedIn timeline is probably the most misrepresented legal narrative in scraping writing. Here's what actually happened.

April 2022 — The Ninth Circuit ruling (the win): The court reaffirmed that scraping publicly accessible data likely does not violate the CFAA. Key quote from the opinion: "Van Buren reinforces our conclusion that the concept of 'without authorization' does not apply to public websites." (Ninth Circuit, April 18, 2022) This ruling is binding in the Ninth Circuit — California, Oregon, Washington, Arizona, Nevada, Idaho, Montana, Alaska, Hawaii.

What the ruling established:

- CFAA does not apply to public-data scraping (no login required)

- Van Buren v. United States (Supreme Court, 2021) narrowed CFAA interpretation to support this reading

- Public-data scrapers cannot be prosecuted under the CFAA in the Ninth Circuit

What the ruling did NOT establish:

- ToS compliance — platforms can still sue for breach of contract

- GDPR/CCPA compliance — those are entirely separate legal frameworks

- Permission to use fake accounts or bypass authentication to access non-public data

November–December 2022 — The settlement (the loss): The district court found hiQ had also used fake accounts to access non-public data — and hiQ stipulated to CFAA liability for that conduct in the settlement. In December 2022, hiQ paid $500,000, agreed to destroy all scraped data and source code, and was permanently enjoined from scraping LinkedIn. (Lection.app, Jan 7, 2026) hiQ Labs is effectively defunct.

The settlement creates zero precedent — it's a private agreement. The Ninth Circuit ruling stands. But the company that won the ruling did not survive to benefit from it.

What competing guides get wrong: As of April 2026, most technical primers still describe hiQ as a clean win for scrapers. The settlement record shows it was a Pyrrhic outcome — legal fees alone likely exceeded the business value, the data was destroyed, and the company folded. The ruling is good law. The story isn't a victory narrative.

January 2024 — Meta v. Bright Data: A federal judge ruled against Meta. The court found Bright Data could only violate Meta's ToS if Meta proved scraping occurred while logged into a Meta account. Meta failed to prove this. The public-data principle was reinforced: data accessible without authentication is harder to attack legally. (Lection.app, Jenner & Block analysis)

Platform-by-Platform Legal Status: 7 Platforms × 4 Jurisdictions

This is the matrix no competitor publishes at this resolution. Each cell shows: ToS stance, CFAA posture, primary data-protection framework, and enforcement risk.

| Platform | US (CFAA / ToS) | EU (GDPR + EU Data Act) | UK (Post-Brexit GDPR) | Other (CA CCPA / Canada PIPEDA) | Enforcement Risk |
|---|---|---|---|---|---|
| Instagram | ToS §4.2 explicitly bans automated access "without express permission" (Instagram ToS). CFAA: clear (public data). Contract risk: HIGH. Meta enforces aggressively: IP blocks, fingerprinting, rate-throttle, and a dedicated restriction notice. | GDPR Art. 6 lawful basis required; dual ToS + GDPR liability; VERY HIGH. | GDPR-equivalent; ICO active; HIGH. | CCPA applies to CA residents' data; PIPEDA in Canada; MEDIUM. | Red: Do not scrape for production. Use Instagram Graph API or authorized partner. |
| TikTok | ToS prohibits automated collection (verbatim ban confirmed by secondary analysis; primary source at TikTok ToS). CFAA: clear. Contract risk: HIGH. Device-ID fingerprinting and headless-browser detection are sophisticated. US ban pressure (CFIUS, 2024–2025) creates additional uncertainty. | GDPR + DSA obligations (169M EU MAU reported Jan–Jun 2025 under DSA Art. 24(2)); CRITICAL. | ICO scrutiny; VERY HIGH. | CCPA applies; MEDIUM-HIGH. | Red: High detection + political uncertainty. Not recommended for production pipelines. |
| YouTube | ToS §3 permits automated access for "public search engines, in accordance with YouTube's robots.txt file" (YouTube ToS). YouTube is the only platform that gives robots.txt compliance contractual weight. CFAA: clear. Contract risk: LOW (but scraping is inefficient post-2024 JS changes). | GDPR + EU Data Act; official API recommended; MEDIUM. | MEDIUM. | LOW–MEDIUM. | Amber: Use YouTube Data API. Scraping metadata is slow and fragile. See YouTube platform page. |
| LinkedIn | hiQ ruling: public-data scraping ≠ CFAA violation. ToS explicitly bans automated access (LinkedIn User Agreement). Contract risk: MEDIUM-HIGH. LinkedIn litigates; the hiQ settlement is proof. | GDPR + EU Data Act; VERY HIGH. | HIGH. | PIPEDA in Canada; MEDIUM. | Amber: Legal but dangerous. LinkedIn spent years litigating hiQ. Use official API or authorized partners. |
| Twitter/X | ToS (updated April 10, 2026) explicitly names "scrape" in its prohibitions. CFAA: clear. Official API tiers exist (Basic free, paid tiers). Contract risk: MEDIUM for unauthorized scraping; LOW using official API. | GDPR + EU Data Act; MEDIUM-HIGH. | MEDIUM. | LOW–MEDIUM. | Amber: Use the official API. Unauthorized scraping is explicitly named in ToS. See Twitter/X platform page. |
| Reddit | Reddit explicitly permits public scraping via JSON feeds (/r/[subreddit].json). Rate limits apply. Contract risk: VERY LOW (unique among major platforms). 2023 API pricing ($0.24/1K calls beyond free tier) affected third-party apps, not public scraping. | GDPR applies; Reddit's permissive stance simplifies compliance but doesn't eliminate controller obligations; LOW–MEDIUM. | LOW. | VERY LOW (explicit platform permission). | Green: Scrape respectfully. Reddit is the ethical reference case. See Reddit platform page. |
| Facebook | ToS §3.2 (effective March 4, 2026) bans automated collection without permission and explicitly "reserve[s] all rights against text and data mining", a new March 2026 addition. CFAA: clear. Contract risk: LOW–MEDIUM (weak historical enforcement vs. Instagram). | GDPR + EU Data Act; MEDIUM-HIGH. | MEDIUM. | LOW. | Amber: Low enforcement but GDPR exposure. Official Graph API preferred. See Facebook platform page. |

Three post-table callouts:

The YouTube robots.txt carve-out. YouTube is the only platform that explicitly grants robots.txt compliance as a ToS carve-out for public search engines. This gives the Robots Exclusion Protocol (RFC 9309) contractual weight specifically on YouTube; no other major platform extends this. Respecting YouTube's robots.txt is a ToS requirement, not just a convention.

The CCPA "publicly available" myth. California Civil Code § 1798.140(v)(2)'s exemption covers information "lawfully made available from federal, state, or local government records." Public Instagram profiles are not government records. This exemption does not apply to scraped social media data. It is one of the most common CCPA misunderstandings in technical writing on this topic.

EU Data Act watch (Regulation 2023/2854, applicable from September 12, 2025). The Act governs IoT/connected-device data — not social media content directly. But its Database Directive review provision weakens the sui generis database-right defense that scrapers historically relied on when building competing databases of platform data. It does not require platforms to share user data with scrapers. "Watch this space" is the right framing for 2026 — finalization of platform-specific provisions could create research scraping carve-outs, but is not confirmed. (EUR-Lex)

What GDPR, CCPA, and the EU Data Act Say About Scraped Data

GDPR: Lawful Basis and Data Controller Status

Any scraper processing EU residents' data is a data controller under GDPR — regardless of whether the source data is public. "Publicly available" is not a GDPR lawful basis.

Article 6 requires one of six lawful bases. For commercial scraping, the typical claim is Article 6(1)(f): legitimate interests. But legitimate interest requires a documented balancing test — is scraping necessary? Is the privacy impact proportionate to the purpose? Commercial competitive scraping is unlikely to pass cleanly. Academic research and press-freedom scraping have stronger cases.

Article 30 requires Records of Processing Activities (RoPA) for any controller operating at commercial scale. For a scraper, that means documenting:

- Categories of data subjects (e.g., public Instagram profile holders)

- Categories of personal data collected (usernames, bios, follower counts, post content)

- Purposes of processing

- Categories of recipients

- Retention periods and security measures
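One way to keep those entries from drifting out of date is to store them next to the scraper code as a structured record. The sketch below is an illustration only, not a legally sufficient RoPA template: the class name ProcessingRecord, its field names, and the example values are all hypothetical, chosen to mirror the list above.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ProcessingRecord:
    """One Article 30-style entry; fields mirror the documentation list above."""

    data_subjects: list[str]
    personal_data: list[str]
    purposes: list[str]
    recipients: list[str]
    retention_days: int
    security_measures: list[str] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Names of entries left empty (or zero), so gaps show up before an audit."""
        return [name for name, value in asdict(self).items() if not value]


# Hypothetical example values for a small engagement-analytics scraper.
record = ProcessingRecord(
    data_subjects=["public Instagram profile holders"],
    personal_data=["usernames", "follower counts"],
    purposes=["engagement benchmarking"],
    recipients=["internal analytics team"],
    retention_days=90,
    security_measures=["encryption at rest", "role-based access"],
)

print(json.dumps(asdict(record), indent=2))
print("missing:", record.missing_fields())
```

A record like this can be serialized into whatever register your compliance team actually maintains; the point is only that each category in the list has an explicit, reviewable value.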

The CPPA Enforcement Advisory No. 2024-01 (California Privacy Protection Agency, April 2, 2024) reinforces the data minimization principle: "A business' collection, use, retention, and sharing of a consumer's personal information shall be reasonably necessary and proportionate to achieve the purposes." (CPPA Advisory 2024-01) Scraping full profiles when you only need engagement rate fails minimization under both GDPR Art. 5(1)(c) and CCPA.

Penalty exposure: €20M or 4% of annual global revenue, whichever is higher. Applies extraterritorially — a US scraper processing EU residents' data is subject to GDPR. (GDPR Article 6, EUR-Lex)

For a GDPR data processing agreement template and regional checklist, see /docs/compliance.

CCPA: The "Publicly Available" Myth

Social media profiles qualify as "personal information" under California Civil Code § 1798.140(v) — they can be linked to a California resident.

The § 1798.140(v)(2) exemption applies only to government records. Not social posts. Not public bios. Not public follower counts.

The California Privacy Rights Act (CPRA, 2023) added data minimization requirements and expanded sensitivity categories — it's closer to GDPR-lite than the original CCPA. Opt-out obligations and data deletion rights apply. Active enforcement: in 2024, California AG Rob Bonta announced an investigative sweep targeting businesses selling or sharing consumer personal information, confirming the AG's broad interpretation of what constitutes personal data.

EU Data Act 2025–2026: What It Actually Covers

Regulation 2023/2854 entered into force January 11, 2024, applicable from September 12, 2025.

The Act governs IoT/connected-device data. It does NOT require social media platforms to share user content with scrapers. What it does affect is the sui generis database right under the Database Directive (96/9/EC): the Data Act limits this right for IoT-derived data, weakening one defensive argument scrapers have used when challenged on building competing databases.

The "watch this space" framing for 2026: if finalized provisions extend to dominant digital platforms, research scraping rights may be carved out — but this is not confirmed and should not be planned around. (EC Digital Strategy)

The Technical Playbook: Proxies, Rate Limits, and Headless Browsers

Proxies

IP-based blocking is the primary platform defense. Scrapers rotate through residential or data-center proxy pools to avoid bans.

Residential proxies are more convincing — they look like real users. Cost: $8–15/GB for quality residential pools. Some residential proxy networks source IPs from compromised home machines — ethically and legally gray, with potential botnet exposure. Data-center proxies are cheaper and more detectable but cleaner ethically.

The honest math: proxy costs compound fast. At 100K requests/month averaging 200KB per response, that's 20GB — $160–300/month in proxy costs alone, before engineering time.
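The arithmetic is simple enough to parameterize for your own volumes. This is a rough sketch that reproduces the figures above; the function name proxy_cost_usd is hypothetical, and it assumes decimal units (1 GB = 1,000,000 KB) and that every request is billed at the full average response size.

```python
def proxy_cost_usd(requests_per_month: int, avg_response_kb: float, price_per_gb: float) -> float:
    """Estimate monthly proxy spend from request volume and bandwidth price."""
    gigabytes = requests_per_month * avg_response_kb / 1_000_000  # KB -> GB (decimal)
    return gigabytes * price_per_gb


# The scenario from the text: 100K requests/month at 200KB each, $8-15 per GB.
low = proxy_cost_usd(100_000, 200, 8)
high = proxy_cost_usd(100_000, 200, 15)
print(f"${low:.0f}-${high:.0f} per month")  # prints "$160-$300 per month"
```

Headless-browser sessions usually pull far more than the document itself (scripts, images, API calls), so the average response size is the parameter most worth measuring rather than guessing.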

Headless Browsers

Instagram and TikTok render content client-side. A plain requests.get() returns a JavaScript shell. You need a headless browser to execute the JS and extract rendered content.

Tools: Puppeteer (Node/JS), Playwright (Python, Node, .NET, Java), Selenium (Python, Java).

The honest cost: headless browsers are 10× slower than raw HTTP. Not optional for JS-heavy platforms — but not free either.

When you don't need them: Reddit (public JSON API), Twitter (official API tier available).

TLS Fingerprinting and Detection

Modern platforms track more than IP addresses. TikTok's detection stack tracks:

- Browser TLS fingerprint (cipher suite order, extensions)

- Canvas fingerprint (GPU-based rendering signature)

- WebGL fingerprint

- Timezone/language/resolution combos

- Request header ordering and values

TikTok's fingerprinting is sophisticated enough to break most scrapers within 4–6 weeks of deployment. Budgeting for constant maintenance or skipping it entirely are equally valid engineering decisions — the third option (building a durable TikTok scraper) is expensive and fragile.

Python Rate-Limit Pattern

Here's a more complete polite-scraper skeleton — with explicit rate-limit sleep, jitter to avoid thundering-herd patterns, and a declared User-Agent:

```python
import random
import time

import httpx

RATE = 1.0  # 1 req/sec ceiling: polite default for public-interest use
UA = "MyResearchBot/1.0 (+https://your-site.example/robots)"


def fetch(url: str) -> str:
    r = httpx.get(url, headers={"User-Agent": UA}, timeout=10)
    r.raise_for_status()
    return r.text


urls = [
    "https://www.reddit.com/r/python/top.json?t=week&limit=25",
    # add more public endpoints here
]

for u in urls:
    page = fetch(u)
    # parse page …
    time.sleep(RATE + random.uniform(0, 0.5))  # jitter avoids thundering-herd patterns
```

Or you skip all of this and call an API that's already handling rate limits, proxies, and TLS fingerprinting for you: see the scraping API comparison and the unified social media API guide.

Rate-Limit Ethics: A First-Principles Framework

"Damaging systems: Overwhelming servers with requests can constitute trespass to chattels." — Lection.app

Rate-limit ethics has three layers: technical, contractual, and tort. Most scraping guides cover the first two. The tort layer is what most teams don't know until they're facing a lawsuit.

Technical ethics — don't get blocked. The 1 req/sec baseline isn't arbitrary — it mirrors common crawl practices from reference implementations like Common Crawl's open dataset. Honor Retry-After headers. Use exponential backoff: on a 429 response, wait 1s, then 2s, then 4s, then back off entirely. Not: retry immediately until blocked (hammer-and-pray). Parse X-RateLimit-Remaining and X-RateLimit-Reset — scrapers that ignore these get blocked; scrapers that honor them don't.
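The backoff rule described here can be sketched without tying it to a particular HTTP client. In the example below, send is a hypothetical callable that performs one request and returns a (status, headers) pair; the function names and the injected sleep parameter are assumptions made so the logic can be exercised offline. It handles only the numeric form of Retry-After; the HTTP-date form falls through to the exponential schedule.

```python
import time
from typing import Callable, Mapping, Optional, Tuple

Response = Tuple[int, Mapping[str, str]]  # (status code, response headers)


def retry_delay(attempt: int, headers: Mapping[str, str], base: float = 1.0) -> float:
    """Seconds to wait after a 429: numeric Retry-After if present, else 1s, 2s, 4s..."""
    try:
        return max(float(headers.get("Retry-After", "")), 0.0)
    except ValueError:
        # Header missing or in HTTP-date form: use the exponential schedule.
        return base * (2 ** attempt)


def fetch_with_backoff(
    send: Callable[[], Response],
    max_retries: int = 3,
    sleep: Callable[[float], None] = time.sleep,
) -> Optional[Response]:
    """Call send(); on 429 wait and retry, giving up after max_retries retries."""
    for attempt in range(max_retries + 1):
        status, headers = send()
        if status != 429:
            return status, headers
        if attempt == max_retries:
            break
        sleep(retry_delay(attempt, headers))
    return None  # back off entirely instead of hammering the endpoint


# Offline demonstration with canned responses and a recording "sleep".
responses = iter([(429, {}), (429, {"Retry-After": "5"}), (200, {})])
waits: list = []
result = fetch_with_backoff(lambda: next(responses), sleep=waits.append)
print(result, waits)
```

With a real client, send would wrap something like a requests or httpx call and return its status code and headers; both libraries expose headers as case-insensitive mappings, whereas the plain dicts used in this demonstration are case-sensitive.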

Contractual ethics — respect robots.txt where it has contract force. As established above, YouTube's ToS is the only one that gives robots.txt compliance contractual weight. Elsewhere robots.txt is a convention, not a binding obligation — but it signals intent and courts have weighed it.
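Checking robots.txt before fetching takes only a few lines with Python's standard library. The sketch below parses an inline rule set so it runs offline; the rules, the example.com URLs, and the helper name allowed are hypothetical, and a real crawler would load the live file with RobotFileParser.set_url() and read() instead.

```python
from urllib.robotparser import RobotFileParser


def allowed(robots_lines: list[str], user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt rules permit user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_lines)
    return parser.can_fetch(user_agent, url)


# Hypothetical rules, not any real platform's robots.txt.
rules = [
    "User-agent: *",
    "Disallow: /private/",
]

print(allowed(rules, "MyResearchBot", "https://example.com/public/page"))
print(allowed(rules, "MyResearchBot", "https://example.com/private/page"))
```

A passing check here says nothing about the platform's Terms of Service; it only records that you looked, which is the "signals intent" point made above.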

Tort ethics — avoid trespass-to-chattels exposure. Courts have found that server overload can constitute civil liability independent of CFAA and ToS. The practical threshold: any request rate that causes measurable performance degradation creates exposure. The 1 req/sec / 1,000 req/day public-interest default keeps you well inside the safety zone.
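The 1 req/sec and 1,000 req/day default can be enforced in code rather than left to discipline. This is a minimal single-process sketch; the class name RequestBudget and the injectable clock are assumptions for illustration, and the thresholds are this guide's defaults, not a legal safe harbor.

```python
import time
from typing import Callable, Optional


class RequestBudget:
    """Gate requests behind a minimum interval and a rolling 24-hour cap."""

    def __init__(
        self,
        min_interval: float = 1.0,
        daily_cap: int = 1000,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.min_interval = min_interval
        self.daily_cap = daily_cap
        self.clock = clock
        self.window_start = clock()
        self.used = 0
        self.last: Optional[float] = None

    def try_acquire(self) -> bool:
        """Return True and count the request if it fits both limits."""
        now = self.clock()
        if now - self.window_start >= 86_400:  # start a fresh 24-hour window
            self.window_start = now
            self.used = 0
        if self.used >= self.daily_cap:
            return False
        if self.last is not None and now - self.last < self.min_interval:
            return False
        self.last = now
        self.used += 1
        return True


# Offline demonstration with a fake clock and a tiny cap of 2 requests/day.
fake_now = [0.0]
budget = RequestBudget(daily_cap=2, clock=lambda: fake_now[0])
results = [budget.try_acquire(), budget.try_acquire()]  # second call is too soon
fake_now[0] = 1.0
results.append(budget.try_acquire())  # interval satisfied
fake_now[0] = 2.0
results.append(budget.try_acquire())  # daily cap reached
fake_now[0] = 86_400.0
results.append(budget.try_acquire())  # new window
print(results)
```

In a scraper loop you would call try_acquire() before each request and sleep or stop when it returns False; a multi-worker setup would need shared state, which this sketch does not attempt.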

Proxy ethics addendum. Residential proxy networks that source IPs from compromised home computers sit in ethical gray territory — someone's machine is in your botnet. Data-center proxies are more detectable but ethically cleaner.

The migration decision tree:

- < 10K req/month: DIY is fine with respectful rate limits

- 10K–100K req/month: Engineering fragility starts exceeding API cost; evaluate the API-first approach

- Over 100K req/month: Unified API is cheaper on total cost (engineering + infra + legal)
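If you want the decision tree in a planning script, it reduces to two thresholds. The sketch below is one possible encoding; the function name recommend and the return labels are hypothetical, and placing exactly 10K and exactly 100K in the middle tier is an assumption, since the tiers above do not say which side the boundaries fall on.

```python
def recommend(requests_per_month: int) -> str:
    """Map monthly request volume to the guide's three tiers."""
    if requests_per_month < 10_000:
        return "diy"  # respectful DIY scraping is fine
    if requests_per_month <= 100_000:
        return "evaluate-api"  # fragility starts to exceed API cost
    return "unified-api"  # cheaper on total cost


for volume in (5_000, 50_000, 250_000):
    print(volume, recommend(volume))
```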

For SocialCrawl's rate-limiting behavior and platform-specific limits, see /docs/api/rate-limiting.

When Should You Scrape vs. Use a Unified API?

If your pipeline is one-off research with a disclosed methodology and you're targeting Reddit, scrape it. If it's commercial, SLA-sensitive, multi-platform, or feeding an AI agent — the break-even with a commercial API is roughly week two of engineering time.

| Factor | DIY Scraping | Unified API (SocialCrawl) |

|---|---|---|

| Time to first result | 1–4 hours (one platform) | < 5 minutes |

| GDPR controller liability | You, alone | SocialCrawl maintains controller-role data lineage documentation and audit logs via /docs/compliance |

| Platform ToS breach risk | Yes — all 7 platforms prohibit it except Reddit | No — authorized access only |

| Engineering maintenance (12 months) | High — fingerprinting arms race, HTML drift, IP rotation | Low — SocialCrawl maintains |

| Schema consistency | Breaks with every platform update | Stable unified schema across 21 platforms, 108 endpoints |

| Cost at 100K req/month | $50K+/year (engineering) + ~$5K infra | ~£299/month (Pro tier) |

| Flexibility (custom fields) | 100% | ~80% (unified schema covers most production use cases) |

The 20% flexibility gap is real — if you need a very specific undocumented field from a niche platform endpoint, DIY gives it to you. For most commercial pipelines, the unified schema covers it.

If you're a startup or a data team that needs consistent profiles from 3+ platforms, the break-even with a unified API hits in about two weeks of engineering time. At 100K+ requests/month, it's not a close call.

For a breakdown of specific commercial tools, see the tool comparison. For the deeper concept of what a unified API actually normalizes, see the unified social media API guide. For pricing, see /pricing.

Case Law and Enforcement Updates: 2024–2026

Meta v. Voyager Labs (January 2023 filing): Meta filed suit alleging Voyager Labs created over 38,000 fake Facebook accounts to scrape publicly posted information from more than 600,000 users — posts, likes, photos, friend lists. Meta had previously disabled over 60,000 Voyager Labs-related accounts. This case is the paradigm of what does create CFAA liability: fake accounts plus non-public-to-the-actor data access. The case disposition had not been independently verified from primary sources at time of writing — treat the filing details as confirmed, the outcome as unresolved. (CNBC, January 12, 2023)

Meta v. Bright Data (January 2024): Federal judge ruled against Meta. Bright Data only violates ToS if Meta proves scraping occurred while logged in to a Meta account. Meta failed to prove this. Case reinforces the public-data principle. (Lection.app)

Clearview AI settlement (~2025): Clearview agreed to give plaintiffs a 23% equity stake in the company, valued at approximately $51 million. The liability framework was biometric privacy (Illinois BIPA), not CFAA and not ToS breach. This is a distinct risk vector from standard social scraping: the liability was unauthorized collection of biometric data without consent, not unauthorized access to platform content. (Lection.app)

TikTok ban pressure (2024–2025): CFIUS-driven national security pressure in the US created a live geopolitical risk factor on top of the technical risk. If TikTok is banned in the US, scraping it is moot. The EU is monitoring DSA compliance — TikTok's 169M EU MAU figure comes from its own DSA Article 24(2) transparency reporting. Scraping TikTok means accepting political risk as part of the infrastructure calculus.

GDPR enforcement trend: The enforcement trajectory is upward. Meta was fined €1.2B in 2023 for US data transfer violations. Scraping without documented lawful basis under GDPR Art. 6 and records under Art. 30 is a high-risk compliance gap in 2026. For updated regional guidance, see /docs/compliance.

EU AI Act (applicable from 2025+): High-risk AI systems using scraped data for profiling or decision-making may require conformity assessments. Specific obligations for scraped training data are still being implemented — "watch this space" is accurate for now.

Frequently Asked Questions

Is social media scraping legal?

In the US, scraping publicly accessible social data does not violate the CFAA after hiQ Labs v. LinkedIn (Ninth Circuit, 2022): the CFAA does not reach data accessible without authentication. Platform Terms of Service create a separate civil liability. Instagram, LinkedIn, and TikTok explicitly ban scraping, creating contract breach exposure even without CFAA risk. GDPR (EU) and CCPA (California) apply to how you store and use the data regardless of collection legality. Outside the US, the CFAA question is irrelevant and local data-protection law governs entirely.

What is social media scraping?

Social media scraping is the automated extraction of public data — posts, profiles, follower counts, engagement metrics, comments — from social platforms without the platform's explicit permission. It uses web scrapers, bots, or headless browsers to send requests and parse responses, extracting structured data for analysis, research, or downstream systems. It differs from official APIs (authorized, rate-limited) and unified APIs (authorized, multi-platform, schema-normalized) in that it operates without platform permission.

How does social media scraping work?

Scrapers send HTTP requests to platform endpoints — or render pages in headless browsers for JavaScript-heavy platforms — parse the HTML or JSON response to locate structured fields, extract the data, then store or forward it. The process: request → render (if JS-required) → parse → extract → store. JavaScript-heavy platforms like Instagram and TikTok require Puppeteer or Playwright. Reddit and older Twitter endpoints work with plain HTTP calls. See the technical playbook section above for platform-specific guidance and code examples.

What is the social media scraping definition?

Social media scraping is automated data collection from public social platform pages without the platform's explicit authorization. It is distinct from official API integration (which is authorized) and from manual research (which is human-operated). It extracts structured data at scale — profiles, posts, engagement metrics, timestamps — for purposes including competitive analysis, brand monitoring, academic research, and AI training data. The "social" modifier distinguishes it from general web scraping by the nature of the target platforms.

What does social media scraping mean in a business context?

In business contexts, social media scraping refers to systematic extraction of competitor data, brand mentions, audience insights, or content performance metrics from social platforms. It is distinct from official API integration in that it bypasses platform authorization and rate limits — with the associated legal trade-offs. Commercial scraping at scale carries ToS breach risk on most platforms, GDPR exposure for EU resident data, and engineering costs that often exceed the cost of commercial data APIs at 100K+ requests/month.

Why is social media scraping risky?

Three risk types: Legal — ToS breach creates civil contract liability on most platforms, GDPR/CCPA violations carry fines up to €20M or 4% of annual revenue, and some jurisdictions have additional restrictions. Technical — IP blocking, fingerprint detection, and HTML drift break scrapers routinely; TikTok scrapers have a 4–6 week production half-life. Financial — scraping looks cheap upfront but engineering costs (maintenance, proxy rotation, headless browser infrastructure) compound quickly. At 100K+ requests/month, a commercial API is typically cheaper on total cost.

Which platforms are safest to scrape?

Reddit is the only major platform with Terms of Service that explicitly permit scraping of public content — it even provides public JSON feeds (/r/[subreddit].json). Twitter/X has an official API with a free Basic tier; its ToS explicitly prohibits scraping outside authorized API channels. Instagram, TikTok, and LinkedIn explicitly prohibit automated access and actively enforce. Facebook prohibits it but has historically been weaker on enforcement. See the 7×4 matrix above for the full breakdown. For zero-ambiguity access to all seven platforms, see the tool comparison.

What are the GDPR requirements for a social media scraper?

You need: (1) A lawful basis under Article 6 — usually "legitimate interests" with a documented balancing test, rarely "consent." (2) Records of Processing Activities (Article 30) — document categories of data subjects, data types, purposes, recipients, retention periods, and security measures. (3) Data minimization compliance — collecting only what you actually need. (4) A Data Processing Agreement with any third-party processors. These requirements apply to US-based scrapers processing EU residents' data — GDPR is extraterritorial. For a compliance checklist and DPA template, see /docs/compliance.

What happens if you get caught scraping a platform?

Consequences depend on platform and jurisdiction. IP blocks and account bans are universal and immediate. Civil claims for ToS breach (contract damages, not criminal) come next: LinkedIn kept pursuing hiQ on contract grounds even after losing on CFAA grounds, and the legal cost alone was the penalty. GDPR fines run up to €20M or 4% of global annual revenue in the EU. CFAA no longer applies to public data in the Ninth Circuit, but fake-account access remains a CFAA risk. The practical lesson from hiQ: even winning on the primary legal theory is expensive. The settlement cost hiQ $500K on top of years of litigation, and the company did not survive.

Where to Go From Here

The legal landscape for social media scraping narrowed measurably between 2022 and 2026 — the CFAA question is settled, but the GDPR, ToS, and enforcement questions are not. The hiQ ruling cleared one barrier; the settlement, the Voyager Labs filing, and the GDPR enforcement trend built three more.

For a breakdown of commercial APIs that handle the compliance layer, see the tool comparison or the unified API concept guide.
